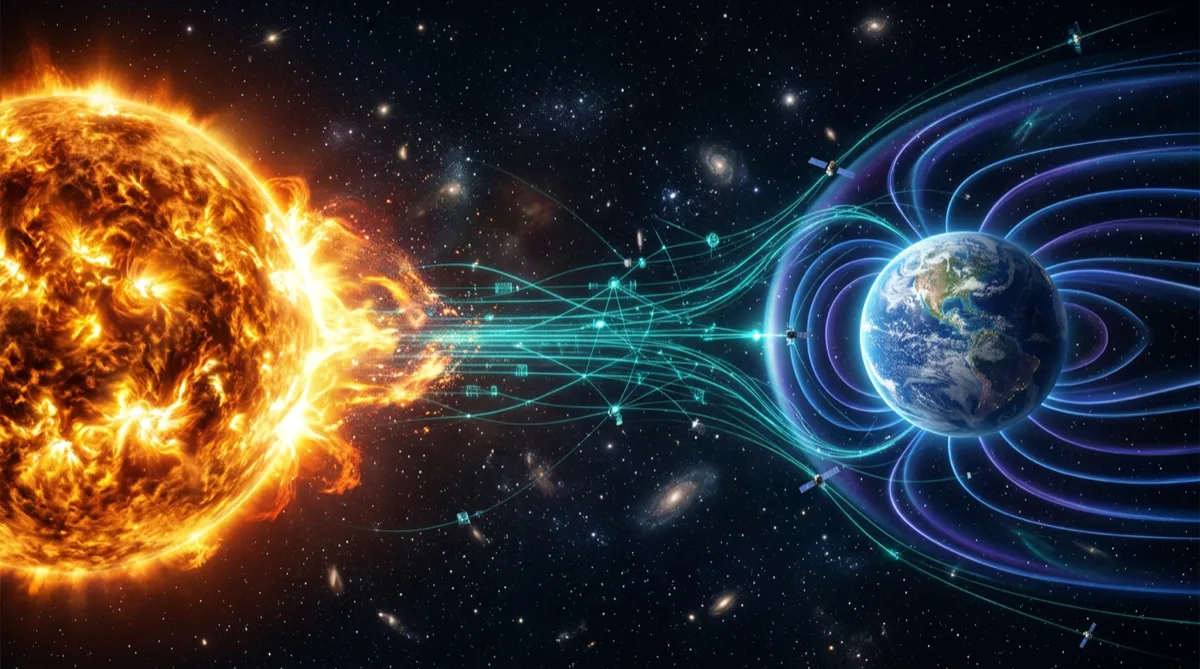

Space weather — the environmental conditions in space driven by solar activity — affects satellite operations, communications, GPS accuracy, power grid stability, and human spaceflight. Monitoring, modeling, and forecasting space weather requires engineering data systems that can ingest high-rate sensor data from multiple sources, process it in near-real-time, and deliver actionable products to operators and automated systems.

This article covers the engineering architecture for space weather data systems from a software and systems integration perspective.

What Space Weather Systems Monitor

Space weather data systems typically integrate multiple sensor types across different orbital regimes:

Solar X-ray flux: Monitored by GOES satellites (NOAA) to detect solar flares classified by peak X-ray flux (A/B/C/M/X classes). Flares affect HF radio communications and GPS ionospheric error corrections within minutes of occurrence.

Solar energetic particles (SEP): High-energy protons and electrons accelerated by solar events. SEP events degrade solar panel performance, increase radiation dose to electronics, and create single-event upset (SEU) risks for spacecraft.

Geomagnetic activity (Kp index): Derived from a global network of ground-based magnetometers. Geomagnetic storms increase drag on low-Earth-orbit satellites, drive surface charging on polar-orbiting satellites, and induce currents in power grids (geomagnetically induced currents, GIC).

Total electron content (TEC): Derived from GPS receiver networks. Ionospheric scintillation and TEC gradients affect GPS positioning accuracy and timing — critical for precision navigation and weapon systems.

Solar wind parameters: Measured by spacecraft at the L1 Lagrange point (ACE, DSCOVR). L1 data provides ~30-60 minute advance warning of incoming solar wind conditions before they reach Earth.
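The 30-60 minute warning window follows from simple arithmetic: the travel time from L1 to Earth is the distance divided by the solar wind speed. The sketch below assumes an Earth-L1 distance of roughly 1.5 million km and a constant speed along the way; the function name and constant are illustrative, not part of any real library.

```python
# Approximate Earth-L1 distance in km (assumption for this sketch).
L1_DISTANCE_KM = 1.5e6


def l1_lead_time_minutes(solar_wind_speed_km_s: float) -> float:
    """Minutes for plasma measured at L1 to reach Earth at a constant speed."""
    if solar_wind_speed_km_s <= 0:
        raise ValueError("solar wind speed must be positive")
    travel_seconds = L1_DISTANCE_KM / solar_wind_speed_km_s
    return travel_seconds / 60.0


# Slow, moderate, and fast solar wind speeds in km/s.
for speed in (400.0, 600.0, 800.0):
    print(f"{speed:.0f} km/s: {l1_lead_time_minutes(speed):.1f} min of warning")
```

At 400 km/s this gives 62.5 minutes, and at 800 km/s about 31 minutes. Faster solar wind, which tends to accompany the strongest storms, leaves the least warning time.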

Data Ingestion Architecture

Space weather data arrives from heterogeneous sources with different protocols, data formats, and latencies:

Sources:

- NOAA GOES-R series (SEISS, EXIS, SUVI): HTTP/HTTPS download, HRIT broadcast

- NASA ACE/DSCOVR (L1 solar wind): NOAA SWPC API feeds

- Ground magnetometer networks (SuperMAG, INTERMAGNET): IAGA-2002 format

- GPS receiver networks: RINEX observation files

- Observatory feeds: custom proprietary formats per institution

The ingest layer must handle:

Multi-format parsing: FITS files for scientific data, NetCDF for gridded products, JSON APIs for real-time parameter feeds, binary telemetry for direct spacecraft data. Build a pluggable parser registry where each data source has a registered parser — new sources add a parser without modifying the core pipeline.
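A pluggable parser registry can be sketched as a dictionary of parser functions populated by a decorator. Everything named here (`register_parser`, `parse`, the `example-json-feed` source ID, and the `time_tag`/`flux` field names) is hypothetical and chosen only for illustration; a real feed would need its own parser and field mapping.

```python
import json
from typing import Callable, Dict, List

# Hypothetical record type for this sketch: one dict per parsed observation.
Record = Dict[str, object]
Parser = Callable[[bytes], List[Record]]

_PARSERS: Dict[str, Parser] = {}


def register_parser(source_id: str) -> Callable[[Parser], Parser]:
    """Decorator that registers a parser function under a source ID."""
    def decorator(func: Parser) -> Parser:
        if source_id in _PARSERS:
            raise ValueError(f"parser already registered for {source_id!r}")
        _PARSERS[source_id] = func
        return func
    return decorator


def parse(source_id: str, payload: bytes) -> List[Record]:
    """Dispatch a raw payload to the parser registered for its source."""
    try:
        parser = _PARSERS[source_id]
    except KeyError:
        raise LookupError(f"no parser registered for {source_id!r}") from None
    return parser(payload)


@register_parser("example-json-feed")
def parse_json_feed(payload: bytes) -> List[Record]:
    # Assumes a JSON array of objects with "time_tag" and "flux" fields.
    rows = json.loads(payload.decode("utf-8"))
    return [{"timestamp": r["time_tag"], "value": float(r["flux"])} for r in rows]
```

Adding a new source then means writing one decorated function; the dispatch code in `parse` does not change.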

Data latency management: L1 solar wind data arrives with ~1-minute latency. GOES X-ray data arrives with ~5-second latency. Ground magnetometer data may arrive with 5-60 minute latency depending on the station's reporting protocol. The processing pipeline must handle asynchronous arrival and mark gaps with explicit indicators rather than silently dropping missing data.
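One way to make gaps explicit is to project whatever has arrived onto a fixed time grid and emit a placeholder row for every empty slot. This is a minimal sketch that assumes timestamps are already aligned to the cadence; `fill_gaps` and the string flags are illustrative names, not an existing API.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Optional, Tuple

Row = Tuple[datetime, Optional[float], str]


def fill_gaps(
    samples: Dict[datetime, float],
    start: datetime,
    end: datetime,
    cadence: timedelta,
) -> List[Row]:
    """Return one row per expected time slot in [start, end).

    Slots with no received sample get a None value and a MISSING flag,
    so downstream code sees the gap instead of a shorter series.
    """
    rows: List[Row] = []
    slot = start
    while slot < end:
        if slot in samples:
            rows.append((slot, samples[slot], "GOOD"))
        else:
            rows.append((slot, None, "MISSING"))
        slot += cadence
    return rows


# Usage: the 00:01 sample has not arrived yet.
start = datetime(2024, 5, 10, 0, 0, tzinfo=timezone.utc)
received = {start: 410.0, start + timedelta(minutes=2): 425.0}
rows = fill_gaps(received, start, start + timedelta(minutes=3), timedelta(minutes=1))
```

Late-arriving data can then overwrite a MISSING row in place, which keeps the series length stable for windowed computations.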

Quality flagging: Raw sensor data contains quality flags indicating sensor status, calibration anomalies, and data gaps. The ingest layer must propagate quality flags through the processing pipeline so that derived products correctly communicate data confidence.
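A simple propagation rule for a derived average might look like the sketch below: unusable inputs are excluded from the value, and the output is downgraded whenever any input was less than fully good. The rule itself and the `mean_with_quality` name are assumptions for illustration; operational products may use more detailed rules. The `QualityFlag` enum here is a stand-in with the four states named in this article.

```python
from enum import Enum
from typing import Iterable, List, Optional, Tuple


class QualityFlag(Enum):
    GOOD = "GOOD"
    ESTIMATED = "ESTIMATED"
    MISSING = "MISSING"
    BAD = "BAD"


Sample = Tuple[Optional[float], QualityFlag]


def mean_with_quality(samples: Iterable[Sample]) -> Sample:
    """Average the usable inputs and derive a flag for the result."""
    samples = list(samples)
    usable: List[float] = [
        value
        for value, flag in samples
        if value is not None and flag in (QualityFlag.GOOD, QualityFlag.ESTIMATED)
    ]
    if not usable:
        # Nothing trustworthy to average: report the product as missing.
        return None, QualityFlag.MISSING
    mean = sum(usable) / len(usable)
    all_good = all(flag is QualityFlag.GOOD for _, flag in samples)
    # Any degraded or excluded input downgrades the derived product.
    return mean, (QualityFlag.GOOD if all_good else QualityFlag.ESTIMATED)
```

With this rule a consumer can tell a clean average from one computed around a gap, without inspecting the raw inputs.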

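```python
# Simplified data ingestion event structure
from dataclasses import dataclass
from datetime import datetime
from enum import Enum


class QualityFlag(Enum):
    """Data confidence levels carried with every observation."""
    GOOD = "GOOD"
    ESTIMATED = "ESTIMATED"
    MISSING = "MISSING"
    BAD = "BAD"


@dataclass
class SpaceWeatherObservation:
    source: str                 # "GOES-18", "DSCOVR-L1", "SuperMAG-YKC"
    parameter: str              # "X-ray_flux_short", "Bz_GSM", "Kp_index"
    timestamp: datetime         # UTC timestamp of observation
    value: float
    units: str                  # "W/m^2", "nT", "unitless"
    quality_flag: QualityFlag   # GOOD, ESTIMATED, MISSING, BAD
    latency_seconds: int        # Seconds from observation to receipt
```

Real-Time Processing Pipeline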

Space weather processing pipelines are time-series intensive and often require windowed computations (running averages, threshold tracking over time, derivative calculations):

Alert threshold evaluation: X-ray flux monitors require continuous evaluation against NOAA alert thresholds. When the 1-minute average X-ray flux exceeds M5.0 class (5 × 10⁻⁵ W/m²), an alert is generated within the pipeline's processing latency — ideally under 30 seconds from observation.

```python
from collections import deque
from typing import Iterator


def evaluate_xray_alert(
    observation_stream: Iterator[SpaceWeatherObservation],
    window_minutes: int = 1,
) -> Iterator[AlertEvent]:
    """
    Evaluate GOES X-ray flux against NOAA alert thresholds.

    Generates an AlertEvent whenever the windowed average reaches M5.
    AlertEvent, FlareClass, and classify_xray_flux are defined elsewhere.
    """
    # Buffer sized for one sample per second over the window
    buffer: deque[SpaceWeatherObservation] = deque(maxlen=window_minutes * 60)
    for obs in observation_stream:
        # Skip samples that carry no trustworthy value
        if obs.quality_flag in (QualityFlag.BAD, QualityFlag.MISSING):
            continue
        buffer.append(obs)
        # Compute the windowed average only when the buffer is full
        if len(buffer) == buffer.maxlen:
            avg_flux = sum(o.value for o in buffer) / len(buffer)
            flare_class = classify_xray_flux(avg_flux)
            if flare_class >= FlareClass.M5:
                yield AlertEvent(
                    alert_type="SOLAR_FLARE",
                    severity=flare_class,
                    onset_time=buffer[0].timestamp,
                    peak_flux=max(o.value for o in buffer),
                    data_source=obs.source,
                )
```

Derived product generation: Raw observations feed derived products consumed by end users: Kp forecast, radiation belt electron flux maps, ionospheric TEC maps. Derived products have longer computation windows (minutes to hours) and run on scheduled intervals rather than per-observation.
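The alert evaluator earlier in this section compares a `FlareClass` value against `FlareClass.M5` and calls `classify_xray_flux`, neither of which the article defines. One possible shape for them is sketched below. It is an assumption, not the original implementation: the tiers are chosen to mirror the flare thresholds of the NOAA radio blackout scale (M1, M5, X1, X10, X20), and `flare_label` is an extra helper added here to produce the conventional letter-plus-multiplier label.

```python
from enum import IntEnum


class FlareClass(IntEnum):
    """Alert tiers ordered by severity so they can be compared with >=."""
    BELOW_M1 = 0
    M1 = 1
    M5 = 2
    X1 = 3
    X10 = 4
    X20 = 5


# (minimum flux in W/m^2, tier), checked from most to least severe
_TIER_THRESHOLDS = [
    (2e-3, FlareClass.X20),
    (1e-3, FlareClass.X10),
    (1e-4, FlareClass.X1),
    (5e-5, FlareClass.M5),
    (1e-5, FlareClass.M1),
]

# Base flux in W/m^2 for each GOES letter class
_LETTER_BASES = [("X", 1e-4), ("M", 1e-5), ("C", 1e-6), ("B", 1e-7), ("A", 1e-8)]


def classify_xray_flux(flux_w_m2: float) -> FlareClass:
    """Return the highest alert tier whose threshold the flux reaches."""
    for minimum, tier in _TIER_THRESHOLDS:
        if flux_w_m2 >= minimum:
            return tier
    return FlareClass.BELOW_M1


def flare_label(flux_w_m2: float) -> str:
    """Conventional label such as 'M5.0' (letter class plus multiplier)."""
    for letter, base in _LETTER_BASES:
        if flux_w_m2 >= base:
            return f"{letter}{flux_w_m2 / base:.1f}"
    # Fluxes below A1.0 are still reported on the A scale.
    return f"A{flux_w_m2 / 1e-8:.1f}"
```

Using an `IntEnum` keeps the `>=` comparison in the evaluator meaningful, and `flare_label(5e-5)` returns `"M5.0"`, matching the threshold quoted above.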

State management: Storm tracking requires maintaining state across observations — a geomagnetic storm that began 6 hours ago is still ongoing if the Kp index remains above threshold. The pipeline must maintain storm state across system restarts, which requires persisting state to a durable store, not just in-memory buffers.
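The restart requirement can be illustrated with a small state store that writes the storm onset time to disk on every transition and reloads it at startup. This is a sketch under stated assumptions: a local JSON file stands in for the durable store, `StormStateStore` is a hypothetical name, and the storm threshold is taken as Kp 5 (the NOAA G1 level). A production system would more likely use a database or managed key-value store.

```python
import json
import os
import tempfile
from datetime import datetime, timezone
from pathlib import Path
from typing import Optional

KP_STORM_THRESHOLD = 5.0  # Kp 5 corresponds to the NOAA G1 (minor storm) level


class StormStateStore:
    """Tracks whether a storm is ongoing and persists each transition."""

    def __init__(self, path: Path) -> None:
        self.path = path
        self.onset: Optional[datetime] = None
        if path.exists():
            saved = json.loads(path.read_text())
            if saved.get("onset"):
                self.onset = datetime.fromisoformat(saved["onset"])

    def _persist(self) -> None:
        payload = {"onset": self.onset.isoformat() if self.onset else None}
        # Write to a temp file, then rename, so a crash never leaves
        # a half-written state file behind.
        fd, tmp_name = tempfile.mkstemp(dir=str(self.path.parent))
        with os.fdopen(fd, "w") as handle:
            json.dump(payload, handle)
        os.replace(tmp_name, self.path)

    def update(self, timestamp: datetime, kp: float) -> None:
        if kp >= KP_STORM_THRESHOLD and self.onset is None:
            self.onset = timestamp   # storm begins
            self._persist()
        elif kp < KP_STORM_THRESHOLD and self.onset is not None:
            self.onset = None        # storm ends
            self._persist()
```

A process that restarts mid-storm constructs a new `StormStateStore` on the same path and recovers the original onset time instead of treating the next observation as a new storm.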

Data Storage Architecture

Space weather data has distinct storage tiers with different access patterns:

Hot tier (real-time, seconds to minutes): Incoming observations and current alert state. Implemented with Redis time-series or a streaming platform (Kinesis in GovCloud environments) for low-latency access by the alerting engine and real-time displays.

Warm tier (recent history, days to weeks): Used for running the pipeline's windowed computations and for recent event analysis. Amazon Timestream or PostgreSQL with TimescaleDB extension provides efficient time-series queries over recent data.

Cold tier (historical archive, years): Long-term scientific archive used for climatological analysis and model training. AWS S3 with appropriate partitioning (by source, parameter, year/month/day) and Parquet format enables efficient columnar queries via Athena. NOAA maintains public space weather data archives as a reference for historical comparison.
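The cold-tier partitioning scheme can be expressed as a function that builds the object key for a batch of observations. The sketch below assumes Hive-style `key=value` path segments, which Athena can map to partition columns, and one file per source, parameter, and hour; the function name and file naming are illustrative choices.

```python
from datetime import datetime, timezone


def archive_key(source: str, parameter: str, ts: datetime) -> str:
    """Build a partitioned object key for one hour of archived data."""
    if ts.tzinfo is None:
        raise ValueError("timestamp must be timezone-aware")
    ts = ts.astimezone(timezone.utc)  # partition on UTC, not local time
    return (
        f"source={source}/parameter={parameter}/"
        f"year={ts:%Y}/month={ts:%m}/day={ts:%d}/"
        f"{source}_{parameter}_{ts:%Y%m%dT%H}.parquet"
    )


key = archive_key(
    "GOES-18",
    "xray_flux_short",
    datetime(2024, 5, 10, 17, 30, tzinfo=timezone.utc),
)
print(key)
```

Partitioning on source and parameter first lets a query for one parameter over a date range skip every other prefix, which is what keeps scan costs low on a multi-year archive.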

Alert Dissemination

Generated alerts must reach operators through multiple channels simultaneously:

- Email/SMS notifications: Direct alerts to human operators with configurable threshold filters

- Machine-readable feeds: JSON API endpoints for automated systems (satellite flight operations, power grid operators)

- WebSocket streams: Real-time push to monitoring dashboard UIs without polling

- Message bus publication: Alert events published to internal message bus for downstream consumers (orbit propagation services that need to update atmospheric drag models, for example)

Alert dissemination reliability is as important as alert generation accuracy. A correctly generated alert that fails to reach recipients provides no value. Implement redundant delivery paths and alert delivery confirmation logging.
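Redundant delivery with confirmation logging can be sketched as a fan-out loop that attempts every channel independently and records each outcome. The channel callables and the `deliver_alert` name are hypothetical; real channels would wrap an email gateway, an SMS provider, a message bus client, and so on.

```python
import logging
from typing import Callable, Dict

logger = logging.getLogger("alert_delivery")

# A channel is any callable that sends the alert or raises on failure.
Channel = Callable[[dict], None]


def deliver_alert(alert: dict, channels: Dict[str, Channel]) -> Dict[str, bool]:
    """Attempt every channel; one failure never blocks the others."""
    results: Dict[str, bool] = {}
    for name, send in channels.items():
        try:
            send(alert)
            results[name] = True
            logger.info("alert %s delivered via %s", alert.get("id"), name)
        except Exception:
            results[name] = False
            logger.exception("alert %s failed via %s", alert.get("id"), name)
    if not any(results.values()):
        # No path succeeded: escalate so the failure is not silent.
        logger.critical("alert %s reached no channel", alert.get("id"))
    return results
```

The returned mapping doubles as the delivery confirmation record, and it can be stored alongside the alert so that post-event reviews can show which recipients were actually reached.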

Integration with Satellite Operations

Space weather data systems don't operate in isolation — they feed operational systems:

Orbit propagation: Geomagnetic storm conditions increase upper atmosphere density, increasing drag on LEO satellites. Accurate atmospheric models require current and forecast Kp and F10.7 (solar flux) inputs. The ground system software architecture for satellite operations integrates space weather parameters into orbit determination loops.

Anomaly correlation: When a spacecraft reports an anomaly — unexpected attitude disturbance, elevated charging on solar panels, single-event upsets in onboard memory — correlating the anomaly timestamp with concurrent space weather events informs root cause analysis. Space weather data systems that maintain a queryable event log enable post-event reconstruction.

Operational safing: Some spacecraft have automated space weather safing modes triggered by radiation environment thresholds. The commanding system described in our on-orbit software update architecture can receive space weather alert feeds and delay planned software uploads during elevated radiation conditions.
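The anomaly correlation step described above amounts to an interval query against the event log. A minimal in-memory sketch follows; the `SpaceWeatherEvent` type, the `events_near` function, and the 6-hour default window are assumptions for illustration, and a real system would run the equivalent query against its event store.

```python
from datetime import datetime, timedelta, timezone
from typing import List, NamedTuple


class SpaceWeatherEvent(NamedTuple):
    kind: str          # e.g. "SOLAR_FLARE", "GEOMAGNETIC_STORM"
    start: datetime
    end: datetime


def events_near(
    anomaly_time: datetime,
    events: List[SpaceWeatherEvent],
    window: timedelta = timedelta(hours=6),
) -> List[SpaceWeatherEvent]:
    """Return events overlapping [anomaly_time - window, anomaly_time + window]."""
    earliest = anomaly_time - window
    latest = anomaly_time + window
    return [e for e in events if e.start <= latest and e.end >= earliest]


utc = timezone.utc
log = [
    SpaceWeatherEvent("SOLAR_FLARE", datetime(2024, 5, 10, 6, 0, tzinfo=utc),
                      datetime(2024, 5, 10, 7, 0, tzinfo=utc)),
    SpaceWeatherEvent("GEOMAGNETIC_STORM", datetime(2024, 5, 10, 17, 0, tzinfo=utc),
                      datetime(2024, 5, 11, 9, 0, tzinfo=utc)),
]
matches = events_near(datetime(2024, 5, 10, 20, 0, tzinfo=utc), log)
```

Here the anomaly at 20:00 UTC matches only the ongoing geomagnetic storm; the flare ended more than six hours earlier. A match is a lead for root cause analysis, not proof of causation.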

Frequently Asked Questions

What are the primary data sources for real-time space weather monitoring?

The primary real-time sources include NOAA's Geostationary Operational Environmental Satellites (GOES-R series) for solar X-ray and particle data, NASA/NOAA's DSCOVR spacecraft at L1 for solar wind parameters (the Bz component is critical for geomagnetic storm prediction), the USGS ground magnetometer network for geomagnetic activity, and GPS receiver networks for ionospheric TEC. NOAA's Space Weather Prediction Center (SWPC) integrates these sources and provides public API access to derived products and alerts.

How much latency is acceptable in a space weather alerting system?

Acceptable latency depends on the operational use case. HF radio operators and airline dispatchers routing polar flights need solar flare X-ray alerts within 5-10 minutes of flare onset — the radio blackout occurs within minutes of the X-ray peak. Geomagnetic storm alerts from L1 solar wind data (DSCOVR) provide 30-60 minute advance warning of storm onset at Earth — alerting within 5 minutes of L1 data receipt is appropriate. Satellite conjunction assessment for elevated atmospheric drag can tolerate 1-3 hour latency since the drag effect accumulates over many orbits.

How do space weather data systems handle the solar cycle?

The 11-year solar cycle drives significant variation in space weather activity. Solar maximum periods (like 2024-2025) produce far more frequent major events than solar minimum periods. Software systems should be designed and load-tested for solar maximum conditions: capacity that comfortably handles alert generation rates, storage write throughput, and notification volumes at solar minimum may be overwhelmed at solar maximum. Use data from historical major events (the October 2003 Halloween storms, the September 2017 events) as stress test scenarios.

What classification level do space weather data systems typically operate at?

Most operational space weather data is unclassified — NOAA's SWPC products are publicly available. However, space weather monitoring systems integrated into military satellite operations, missile defense, or intelligence programs may handle classified operational data about which satellites are affected and how. The space weather sensor data itself remains unclassified; the system context (which assets are affected and what operational impacts resulted) may be classified.

How does machine learning integrate with space weather forecasting systems?

ML models are increasingly used for solar flare prediction (predicting flare probability and class from active region imagery) and geomagnetic storm prediction (estimating Kp 1-3 hours in advance from L1 solar wind data). Production ML integration requires a model serving infrastructure that can deliver predictions with latency under 30 seconds, handle model version updates without alert service interruption, and log model predictions alongside actual outcomes for continuous evaluation. The MLOps patterns for government systems covered separately apply to space weather ML pipelines operating in FedRAMP or government cloud environments.
