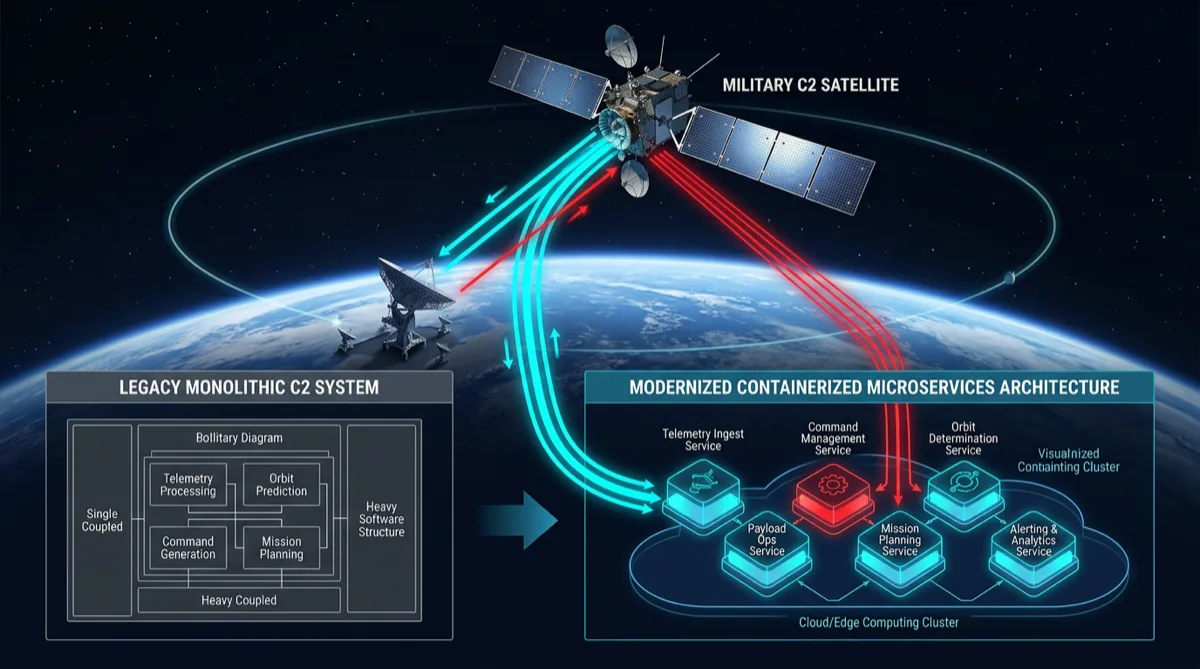

The generation of satellite ground systems deployed from the late 1990s through the 2010s was built as monolithic applications — single codebases handling telemetry ingest, command uplink, state management, and visualization. These systems work, but they create brittleness: a defect in the visualization layer can take down telemetry processing. Scaling one component requires scaling the entire application. Adding a new sensor or command set requires modifying a deeply coupled codebase.

Re-architecting satellite C2 into containerized microservices doesn't just modernize the deployment model — it creates the decomposed, independently testable architecture that modern cATO (continuous Authorization to Operate) depends on.

Core Decomposition: What Becomes a Service

The first architectural decision in C2 modernization is service boundary definition. Ground systems typically decompose into these discrete capability domains:

Telemetry Ingest Service: Receives raw telemetry frames from ground stations or relay networks. Handles protocol translation, frame decommutation, and publication to the data bus. Stateless per frame; scales horizontally with contact windows.
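To make "frame decommutation" concrete, here is a minimal sketch of unpacking one fixed-layout frame into engineering units. The frame layout, field names, and scale factors are assumptions made up for illustration; a real mission would take them from its telemetry dictionary.

```python
import struct
from dataclasses import dataclass

# Hypothetical big-endian frame layout, assumed for illustration only:
#   uint16 spacecraft_id | uint32 frame_counter | uint16 bus_voltage_mv | int16 temp_centi_c
FRAME_FORMAT = ">HIHh"
FRAME_SIZE = struct.calcsize(FRAME_FORMAT)  # 10 bytes, no padding with ">"


@dataclass(frozen=True)
class TelemetryPoint:
    spacecraft_id: int
    frame_counter: int
    bus_voltage_v: float
    temperature_c: float


def decommutate(frame: bytes) -> TelemetryPoint:
    """Unpack one frame and convert raw counts to engineering units."""
    if len(frame) != FRAME_SIZE:
        raise ValueError(f"expected {FRAME_SIZE} bytes, got {len(frame)}")
    sc_id, counter, voltage_mv, temp_centi = struct.unpack(FRAME_FORMAT, frame)
    return TelemetryPoint(sc_id, counter, voltage_mv / 1000.0, temp_centi / 100.0)
```

Because the function holds no state between frames, any number of ingest replicas can run it in parallel, which is what makes horizontal scaling during contact windows straightforward.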

State Management Service: Maintains current spacecraft state — last known position, subsystem health, mode, anomaly flags. Provides the authoritative state read for other services. Persistence-backed; consistency requirements vary by mission criticality.

Command Uplink Service: Manages the command queue, commanding authority (who can send what), command history, and uplink scheduling. Correctness requirements are high: this is the highest-risk component, because a bug in the command service has direct production consequences.
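The "who can send what" check can be sketched as a small queue that consults an authority table before accepting a command. The role names, command names, and the `CommandQueue` class are hypothetical; a real program would load its commanding authority from approved configuration.

```python
from dataclasses import dataclass, field

# Hypothetical role-to-command mapping, assumed for illustration only.
AUTHORITY = {
    "operator": {"NOOP", "SET_MODE_SAFE"},
    "flight_director": {"NOOP", "SET_MODE_SAFE", "FIRE_THRUSTER"},
}


@dataclass
class CommandQueue:
    pending: list = field(default_factory=list)
    history: list = field(default_factory=list)

    def submit(self, role: str, command: str) -> bool:
        """Queue the command only if the role is authorized; always record the attempt."""
        allowed = command in AUTHORITY.get(role, set())
        self.history.append((role, command, "accepted" if allowed else "rejected"))
        if allowed:
            self.pending.append(command)
        return allowed
```

Recording rejected attempts alongside accepted ones keeps the command history useful as audit evidence, not just as an operations log.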

Ephemeris and Trajectory Service: Maintains orbital element sets, propagates trajectories, generates contact windows and look angles. Computationally independent from real-time operations; results are cache-friendly.
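Contact window generation can be illustrated without a full propagator. The sketch below assumes the trajectory has already been propagated into time-ordered `(time_seconds, elevation_degrees)` samples for one ground station, and that a 10 degree elevation mask applies; both the sample format and the mask value are assumptions.

```python
def contact_windows(samples, min_elevation_deg=10.0):
    """Return (start, end) sample times during which elevation stays at or above the mask."""
    windows = []
    start = None
    last_t = None
    for t, elevation in samples:
        if elevation >= min_elevation_deg and start is None:
            start = t  # spacecraft rose above the mask
        elif elevation < min_elevation_deg and start is not None:
            windows.append((start, last_t))  # previous sample was the last one in view
            start = None
        last_t = t
    if start is not None:
        windows.append((start, last_t))  # still in view at the end of the samples
    return windows
```

The output depends only on the element set and the station geometry, so the same windows can be cached and reused until the ephemeris is updated.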

Visualization and Dashboard Service: Consumes state and telemetry from the data bus; presents to human operators. Stateless relative to the spacecraft; can be restarted without affecting operations.

Contact Management Service: Schedules and manages ground station contacts, prioritizes contact windows across multiple missions, coordinates with scheduling systems.

This decomposition means the command uplink service and the visualization service can be deployed, updated, and scaled independently. An update to the dashboard doesn't require a maintenance window that takes down telemetry processing.

Communication Patterns for Real-Time Ground Systems

Ground systems have strict latency requirements on the command uplink path but can tolerate eventual consistency on the monitoring path, where telemetry processing can be pipelined.

Rutagon's standard communication pattern for C2 microservices:

Command path: Synchronous gRPC for command submission and status — immediate acknowledgment is required for operator confidence. gRPC's bidirectional streaming is used for command execution status updates (queued → validated → uplinked → acknowledged by spacecraft).
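The status progression streamed back to the operator can be modeled as a small state machine that rejects out-of-order updates. This sketch covers only the four forward states named above; failure and rejection states, which a real service would need, are left out, and the `advance` helper is hypothetical.

```python
from enum import Enum


class CommandStatus(Enum):
    QUEUED = "queued"
    VALIDATED = "validated"
    UPLINKED = "uplinked"
    ACKNOWLEDGED = "acknowledged"


# Each status may only move to the single next status in the chain.
_NEXT = {
    CommandStatus.QUEUED: CommandStatus.VALIDATED,
    CommandStatus.VALIDATED: CommandStatus.UPLINKED,
    CommandStatus.UPLINKED: CommandStatus.ACKNOWLEDGED,
}


def advance(current: CommandStatus, new: CommandStatus) -> CommandStatus:
    """Return the new status, or raise if the transition skips or reverses a step."""
    if _NEXT.get(current) is not new:
        raise ValueError(f"illegal transition {current.value} -> {new.value}")
    return new
```

Enforcing the order on the server side means a dashboard can never show a command as uplinked before it was validated, whatever order messages arrive in.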

Telemetry path: Event-driven via Amazon Kinesis (GovCloud-available). Telemetry frames are published to Kinesis streams; the state management service, visualization service, and ConMon service all consume independently without coupling.
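A minimal sketch of the publishing side follows. It takes the Kinesis client as a parameter so it can be exercised without AWS credentials. Using the spacecraft identifier as the partition key is an assumption made here to keep one spacecraft's frames ordered within a shard; the function and stream names are hypothetical.

```python
def publish_frame(kinesis_client, stream_name: str, spacecraft_id: str, frame: bytes) -> dict:
    """Publish one raw telemetry frame to a Kinesis stream.

    kinesis_client is expected to expose put_record with the boto3 Kinesis
    keyword arguments StreamName, Data, and PartitionKey.
    """
    return kinesis_client.put_record(
        StreamName=stream_name,
        Data=frame,
        PartitionKey=spacecraft_id,  # assumed keying choice: per-spacecraft ordering
    )
```

With boto3, the client would come from `boto3.client("kinesis")` configured for the program's GovCloud region. Each consumer reads the stream on its own, so adding a new consumer requires no change to this publisher.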

Contact scheduling: Asynchronous messaging via SQS for contact scheduling requests and updates — decoupled from real-time processing, tolerates delays.

Service mesh: Istio mTLS for all intra-cluster communication. See our coverage of Istio service mesh for government applications for the compliance detail.

Container Build and Iron Bank Alignment

Satellite C2 software for DoD programs runs on Iron Bank base images. The Iron Bank hardening process includes vulnerability scanning, CIS benchmark compliance, and STIG application — producing a container base that enters the program's ATO boundary in a compliant state.

For C2 microservices, this means:

- Base image from Iron Bank (Python, Java, or Golang depending on service)

- Application dependencies added in a separate layer with pinned versions

- No `apt-get update` or dynamic package installation at runtime

- Non-root container user

- Read-only filesystem where possible (telemetry ingest and state services)

- Container scanning with Trivy on every build, results published to the ConMon dashboard
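The scanning step in the list above can act as a build gate. The sketch below reads a Trivy JSON report (as produced with `--format json`) and collects findings at blocking severities. Treating HIGH and CRITICAL as blocking is an assumed policy, not one stated by the program, and `blocking_findings` is a hypothetical helper.

```python
import json
from typing import List

# Assumed gate policy for illustration: these severities fail the build.
BLOCKING_SEVERITIES = {"HIGH", "CRITICAL"}


def blocking_findings(report_json: str) -> List[str]:
    """Return vulnerability IDs at blocking severity from a Trivy JSON report."""
    report = json.loads(report_json)
    found = []
    # "Results" and "Vulnerabilities" can be missing or null when nothing was found.
    for result in report.get("Results") or []:
        for vuln in result.get("Vulnerabilities") or []:
            if vuln.get("Severity") in BLOCKING_SEVERITIES:
                found.append(vuln["VulnerabilityID"])
    return found
```

A pipeline step could fail the build when the returned list is non-empty and forward the same report to the ConMon dashboard either way.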

Container images are signed with AWS Signer before deployment. EKS admission webhooks verify image signature before any pod starts — unsigned images are rejected at the cluster level.

cATO Architecture for C2 Systems

Continuous ATO (cATO) means the system maintains authorization through ongoing evidence rather than periodic re-authorization cycles. For a satellite C2 system, cATO requires:

Change management integration: Every code commit triggers a pipeline that runs STIG scans, dependency vulnerability checks, and compliance policy tests. Failed checks block the deployment. The pipeline generates signed evidence artifacts for each passing check.
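One way to picture a signed evidence artifact is a small record that binds a check result to a commit and to a hash of the scan report, then signs the whole record. The sketch below uses an HMAC from the Python standard library as a stand-in for whatever signing mechanism the pipeline actually uses; the field names and both helpers are hypothetical.

```python
import hashlib
import hmac
import json


def _payload(body: dict) -> bytes:
    # Canonical serialization so signer and verifier hash identical bytes.
    return json.dumps(body, sort_keys=True).encode("utf-8")


def evidence_record(check: str, passed: bool, report: bytes, commit: str, key: bytes) -> dict:
    """Build an evidence record for one pipeline check and attach an HMAC signature."""
    body = {
        "check": check,
        "passed": passed,
        "commit": commit,
        "report_sha256": hashlib.sha256(report).hexdigest(),
    }
    signature = hmac.new(key, _payload(body), hashlib.sha256).hexdigest()
    return {**body, "signature": signature}


def verify_record(record: dict, key: bytes) -> bool:
    """Recompute the signature over every field except the signature itself."""
    body = {k: v for k, v in record.items() if k != "signature"}
    expected = hmac.new(key, _payload(body), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, record["signature"])
```

Because the report hash is part of the signed body, an assessor can confirm that the stored scan output is the one the pipeline actually evaluated.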

Infrastructure drift detection: AWS Config rules continuously monitor the C2 system's GovCloud infrastructure against the authorized baseline. Drift triggers a ConMon alert. Configuration changes are tracked in CloudTrail with IAM principal attribution.
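Conceptually, drift detection is a comparison between the authorized baseline and what is currently observed. The sketch below shows that comparison on plain dictionaries; it is not the AWS Config API, and the setting names used with it are hypothetical.

```python
def detect_drift(baseline: dict, observed: dict) -> dict:
    """Map each drifted setting to an (authorized, observed) pair.

    A setting missing on either side appears with None for that side.
    """
    drift = {}
    for name in sorted(baseline.keys() | observed.keys()):
        expected = baseline.get(name)
        actual = observed.get(name)
        if expected != actual:
            drift[name] = (expected, actual)
    return drift
```

An empty result means the environment matches the baseline; anything else is the content of a ConMon alert.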

Penetration test scheduling: cATO doesn't eliminate penetration testing; it puts it on a continuous schedule. Automated penetration testing runs quarterly, with results feeding the ATO evidence package, and a red team assessment runs annually.

AO engagement: cATO depends on the Authorizing Official (AO) being engaged with the continuous evidence stream rather than reviewing a point-in-time package. Rutagon builds AO dashboards that surface the current compliance posture — open POA&M items, recent scan results, upcoming control review dates — so the AO can maintain authorization with appropriate visibility.

GovCloud Deployment Architecture

The C2 microservices run on Amazon EKS in a dedicated GovCloud VPC:

- Multi-AZ EKS cluster with private API server endpoint

- Node groups with instance metadata service v2 (IMDSv2) enforced

- Cluster logging to CloudWatch (API server, audit, authenticator, controller manager)

- Namespace isolation per service with NetworkPolicy restrictions

- Ground station connectivity via AWS Direct Connect, alone or combined with Site-to-Site VPN, for on-premises antenna systems

Ground system programs operating in GovCloud or evaluating cloud modernization for legacy C2 systems can reach Rutagon at contact@rutagon.com.

Related: Defense Microservices Architecture for Subcontractors | GitOps for Federal Government Deployments | Continuous ATO Automation

Frequently Asked Questions

Why decompose satellite C2 into microservices?

Monolithic C2 systems create coupling between components with different availability, scaling, and update requirements. A defect in the visualization layer should not take down telemetry processing. Microservice decomposition allows independent deployment, independent scaling, and independent failure domains, all of which are critical for high-availability ground systems. It also aligns with cATO requirements by making the system's components individually auditable and deployable.

How do you handle the real-time requirements of telemetry processing in microservices?

Telemetry ingest is structured as a stateless, horizontally scalable service that publishes frames to Kinesis. Downstream consumers (state management, visualization, ConMon integration) process independently. This decoupling means telemetry processing throughput scales with the Kinesis shard count rather than being limited by other services. For contact windows with burst ingest rates, the Kinesis buffer absorbs the burst while downstream services process at their natural rate.
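The link between shard count and throughput can be worked as simple arithmetic. The sketch below assumes provisioned-mode write limits of roughly 1 MB per second and 1,000 records per second per shard, which should be checked against current AWS quotas, and it adds a headroom factor that is purely an assumption.

```python
import math

# Assumed per-shard write limits (provisioned mode); verify against current AWS quotas.
SHARD_BYTES_PER_SEC = 1_000_000
SHARD_RECORDS_PER_SEC = 1_000


def shards_needed(frames_per_sec: float, frame_bytes: int, headroom: float = 0.25) -> int:
    """Estimate the shard count for a peak ingest rate, with fractional headroom."""
    scale = 1 + headroom
    by_bytes = frames_per_sec * frame_bytes * scale / SHARD_BYTES_PER_SEC
    by_records = frames_per_sec * scale / SHARD_RECORDS_PER_SEC
    # Whichever limit is hit first determines the count; never fewer than one shard.
    return max(1, math.ceil(max(by_bytes, by_records)))
```

For example, 2,000 frames per second at 1,115 bytes each is 2.23 MB/s; with 25% headroom that is about 2.79 MB/s, so the estimate is 3 shards. With small frames the record-count limit dominates instead of the byte limit.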

What is cATO and why does it matter for satellite ground systems?

Continuous Authorization to Operate (cATO) is a DoD concept where systems maintain ATO through ongoing evidence of security controls rather than periodic reauthorization packages. For satellite ground systems that receive frequent software updates and may operate under aggressive release schedules, cATO eliminates the bottleneck of re-authorization reviews for each change. It requires an engineering approach where compliance evidence is generated automatically by the deployment pipeline.

Can legacy satellite C2 software be migrated to microservices without a full rewrite?

Yes, with a strangler-fig approach. The monolith remains in service while individual capability domains are extracted one at a time into microservices. Each extracted service connects to the legacy system via a defined interface until all capabilities are extracted. This eliminates the risk of a "big bang" migration while making steady progress toward the target architecture. See our legacy defense system modernization coverage for detailed patterns.
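The strangler-fig pattern usually rests on a routing facade in front of the monolith. The sketch below is a minimal version of that idea: requests for capabilities that have been extracted go to the new service, and everything else falls through to the legacy system. The class name, the capability names, and the dictionary request shape are all hypothetical.

```python
from typing import Callable, Dict

Handler = Callable[[dict], dict]


class StranglerRouter:
    """Route each capability to its extracted service, defaulting to the monolith."""

    def __init__(self, legacy: Handler):
        self._legacy = legacy
        self._extracted: Dict[str, Handler] = {}

    def extract(self, capability: str, handler: Handler) -> None:
        """Register a microservice as the new owner of one capability."""
        self._extracted[capability] = handler

    def handle(self, capability: str, request: dict) -> dict:
        handler = self._extracted.get(capability, self._legacy)
        return handler(request)
```

Extraction then becomes a series of small routing changes, each of which can be reverted by removing one registration.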

What Iron Bank images are available for satellite ground system services?

Iron Bank maintains hardened base images for common runtime environments: Red Hat UBI, Python, Java (OpenJDK), Golang, Node.js, and others. Platform One's catalog is the authoritative source for currently approved images and versions. Programs should pin specific Iron Bank image digests rather than tags to prevent unexpected base image changes during builds.
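Digest pinning is easy to enforce mechanically. The sketch below checks whether an image reference ends in a `sha256` digest rather than relying on a mutable tag; the helper name is hypothetical and the pattern is deliberately simple, so it is not a full image-reference parser.

```python
import re

# Matches "<name>@sha256:<64 lowercase hex characters>"; a tag may precede the "@".
_DIGEST_REF = re.compile(r"^[^@\s]+@sha256:[0-9a-f]{64}$")


def is_digest_pinned(image_ref: str) -> bool:
    """Return True only if the reference includes an immutable sha256 digest."""
    return bool(_DIGEST_REF.match(image_ref))
```

A check like this could run in the pipeline against every base image reference in a build file and fail the build on any tag-only reference.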