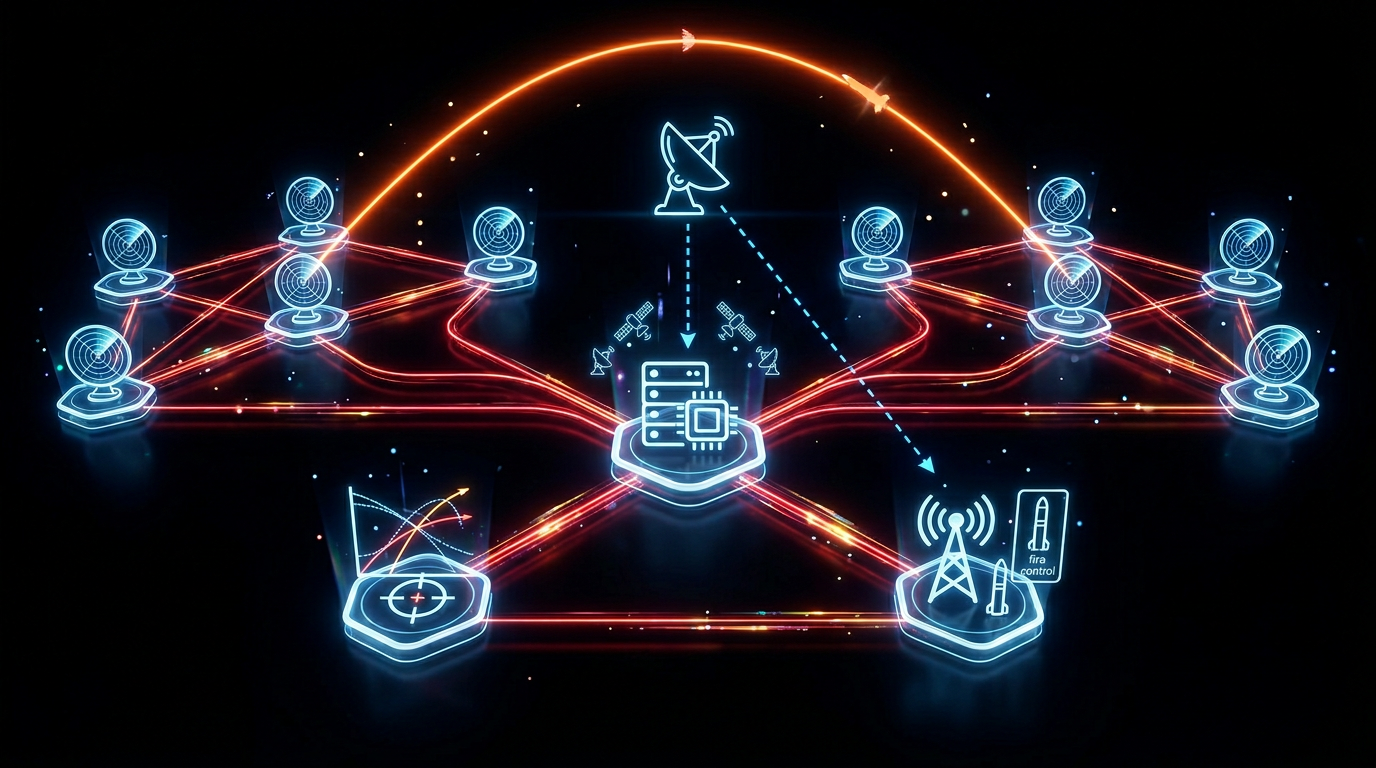

Missile defense systems are among the most demanding software environments in existence: high-volume, heterogeneous sensor data arriving in real time, strict latency requirements on correlation and alerting, fault-tolerance requirements that leave no margin for single points of failure, and security classification boundaries that govern exactly what data can flow where and who can access it.

The software engineering patterns that support these systems are not exotic. They are principled distributed systems design applied to an unforgiving operational context. This article covers the architectural patterns at a level appropriate for public engineering discussion, focusing on what the software does rather than on what the systems it supports can do.

Real-Time Data Pipeline Architecture

The foundational challenge in defense data systems is ingesting high-volume sensor streams, normalizing heterogeneous data formats, correlating events across sources, and producing actionable outputs — all within strict latency windows.

Stream ingestion layer. Sensor data arrives from multiple sources with different formats, rates, and reliability characteristics. A Kafka-based ingestion layer on AWS GovCloud (using Amazon MSK for managed Kafka) provides durable ingestion with configurable retention. Kafka guarantees ordering only within a partition, so events that must stay in sequence (for example, all events from one sensor) should share a partition key. Each sensor source maps to a dedicated topic, with schema enforcement on the producer side through a Schema Registry.
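Schema enforcement catches structural mismatches, but value rules such as a confidence between 0.0 and 1.0 are not expressible in every schema format (Avro, for example, has no range constraints). A producer can also fail fast in code before serializing. The sketch below assumes the fields of the illustrative `SensorEvent` schema in this section; `validateSensorEvent` is a hypothetical helper, not part of any Kafka or Schema Registry client.

```typescript
// Mirrors the illustrative SensorEvent schema in this section.
type SensorType = "RADAR" | "OPTICAL" | "TELEMETRY";
type Classification = "U" | "CUI";

interface SensorEvent {
  sensorId: string;
  sensorType: SensorType;
  timestamp: number; // Unix milliseconds
  trackId: string;
  position: { lat: number; lon: number; alt: number };
  confidence: number; // 0.0 to 1.0
  classification: Classification;
}

const SENSOR_TYPES: readonly string[] = ["RADAR", "OPTICAL", "TELEMETRY"];
const CLASSIFICATIONS: readonly string[] = ["U", "CUI"];

// Hypothetical producer-side guard: returns every problem found; an empty array means valid.
function validateSensorEvent(e: SensorEvent): string[] {
  const errors: string[] = [];
  if (e.sensorId.trim() === "") errors.push("sensorId is empty");
  if (!SENSOR_TYPES.includes(e.sensorType)) errors.push(`unknown sensorType: ${e.sensorType}`);
  if (!Number.isInteger(e.timestamp) || e.timestamp <= 0) {
    errors.push("timestamp must be positive integer milliseconds");
  }
  if (e.trackId.trim() === "") errors.push("trackId is empty");
  const { lat, lon, alt } = e.position;
  // Written as !(in range) so that NaN is rejected too.
  if (!(lat >= -90 && lat <= 90)) errors.push("lat outside [-90, 90]");
  if (!(lon >= -180 && lon <= 180)) errors.push("lon outside [-180, 180]");
  if (!Number.isFinite(alt)) errors.push("alt is not a finite number");
  if (!(e.confidence >= 0 && e.confidence <= 1)) errors.push("confidence outside [0.0, 1.0]");
  if (!CLASSIFICATIONS.includes(e.classification)) errors.push("classification tag missing or unknown");
  return errors;
}
```

Returning all problems at once, rather than throwing on the first, makes it easier to route a rejected event to a dead-letter topic with a complete reason; that routing is one option, not something the source prescribes.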

```typescript
// Sensor event schema (simplified, illustrative)
interface SensorEvent {
  sensorId: string;
  sensorType: 'RADAR' | 'OPTICAL' | 'TELEMETRY';
  timestamp: number; // Unix milliseconds
  trackId: string;
  position: { lat: number; lon: number; alt: number };
  confidence: number; // 0.0–1.0
  classification: 'U' | 'CUI';
}
```

Correlation and fusion layer. Multiple sensors reporting on the same object must be correlated into a unified track. This requires track association logic: matching incoming events to existing tracks based on position proximity, temporal consistency, and sensor-type weighting. Lambda-based consumers process events in micro-batches, with DynamoDB as the track state store. DynamoDB conditional writes enable optimistic locking: each track item carries a version attribute, a write succeeds only if that version is unchanged since the consumer read it, and a consumer that loses the race re-reads the track and retries instead of silently overwriting another consumer's update.
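The optimistic-locking pattern can be sketched without AWS dependencies. Each track carries a version number, and a write succeeds only if the stored version still matches the one the consumer read. In DynamoDB this corresponds to a `ConditionExpression` such as `version = :expected` on `PutItem` or `UpdateItem`, which fails with `ConditionalCheckFailedException` on a conflict. The in-memory `TrackStore`, the `TrackState` fields, and the retry limit below are hypothetical stand-ins chosen for illustration.

```typescript
interface TrackState {
  trackId: string;
  version: number; // incremented on every successful write
  lastUpdate: number; // Unix milliseconds of the newest fused event
  eventCount: number;
}

// Hypothetical in-memory stand-in for a DynamoDB table with conditional writes.
class TrackStore {
  private items = new Map<string, TrackState>();

  get(trackId: string): TrackState | undefined {
    const item = this.items.get(trackId);
    return item ? { ...item } : undefined; // return a copy, like a read from the table
  }

  // Writes only if the stored version equals expectedVersion (0 means "item must not exist").
  // Returns false where DynamoDB would throw ConditionalCheckFailedException.
  putIfVersion(next: TrackState, expectedVersion: number): boolean {
    const current = this.items.get(next.trackId);
    const currentVersion = current ? current.version : 0;
    if (currentVersion !== expectedVersion) return false;
    this.items.set(next.trackId, { ...next, version: expectedVersion + 1 });
    return true;
  }
}

// Read-modify-write with retry: after a conflict, re-read the latest state and reapply.
function applyEvent(store: TrackStore, trackId: string, eventTime: number, maxRetries = 3): TrackState {
  for (let attempt = 0; attempt <= maxRetries; attempt++) {
    const current = store.get(trackId) ?? { trackId, version: 0, lastUpdate: 0, eventCount: 0 };
    const next: TrackState = {
      ...current,
      lastUpdate: Math.max(current.lastUpdate, eventTime),
      eventCount: current.eventCount + 1,
    };
    if (store.putIfVersion(next, current.version)) {
      return store.get(trackId)!;
    }
    // Another consumer updated the track first; loop to re-read and try again.
  }
  throw new Error(`gave up on ${trackId} after ${maxRetries + 1} attempts`);
}
```

A consumer holding a stale copy cannot overwrite a newer one: its conditional write is refused, and the retry loop rebuilds the update from the latest stored state.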

Output and alerting layer. Processed track data feeds downstream systems through event-driven outputs: SNS topics fan out to subscribed consumers (visualization systems, alert management, data logging). Alert thresholds are configuration-driven and hot-reloadable from SSM Parameter Store, so operational parameters can change without a redeployment.
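Hot-reloadable thresholds usually come down to caching the configuration with a short time-to-live and re-reading it when the entry expires. A minimal sketch, assuming an injected `load` function (in a deployment it might wrap a Parameter Store read; here it is hypothetical) and a hypothetical `AlertThresholds` shape. Keeping the last good value when a reload fails is a design choice made for the sketch, not something the source specifies.

```typescript
interface AlertThresholds {
  minConfidence: number; // alert only on tracks at or above this confidence
}

// Hypothetical loader; in a deployment it might wrap an SSM Parameter Store read.
type Loader = () => Promise<AlertThresholds>;

// Caches thresholds and reloads them once ttlMs has elapsed since the last successful load.
class ThresholdCache {
  private value: AlertThresholds | undefined = undefined;
  private loadedAt = Number.NEGATIVE_INFINITY;
  private readonly load: Loader;
  private readonly ttlMs: number;
  private readonly now: () => number;

  constructor(load: Loader, ttlMs: number, now: () => number = Date.now) {
    this.load = load;
    this.ttlMs = ttlMs;
    this.now = now; // injectable clock for testing
  }

  async get(): Promise<AlertThresholds> {
    if (this.value === undefined || this.now() - this.loadedAt >= this.ttlMs) {
      try {
        this.value = await this.load();
        this.loadedAt = this.now();
      } catch (err) {
        // With no previous value there is nothing safe to serve, so surface the error.
        if (this.value === undefined) throw err;
        // Otherwise keep serving the last good thresholds; the next call retries the load.
      }
    }
    if (this.value === undefined) throw new Error("thresholds not loaded");
    return this.value;
  }
}

async function shouldAlert(cache: ThresholdCache, confidence: number): Promise<boolean> {
  const { minConfidence } = await cache.get();
  return confidence >= minConfidence;
}
```

An operator change to the stored parameter takes effect within one TTL period, with no restart of the alerting service.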

Fault-Tolerant C2 Software Design

Command and control software must remain operational when individual components fail. The design principles Rutagon applies:

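No single point of failure. Every critical component runs across multiple availability zones in GovCloud with automatic failover. ECS services configured with a minimum healthy percent of 100% and a maximum percent above 100% (for example, 200%) start replacement tasks before stopping old ones, which gives zero-downtime deployments. Spreading enough tasks across availability zones, with the ECS service scheduler replacing failed tasks, keeps the loss of an instance or a zone from reducing capacity below the operational threshold.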
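These settings reduce to simple arithmetic. Per the ECS documentation, the minimum number of healthy tasks during a deployment is `desiredCount × minimumHealthyPercent / 100` rounded up, and the maximum number of tasks is `desiredCount × maximumPercent / 100` rounded down. The sketch below computes both bounds and adds a hypothetical check of whether the tasks remaining after losing the busiest availability zone still meet an operational floor; the task counts and floor are illustrative.

```typescript
interface DeploymentConfig {
  desiredCount: number;
  minimumHealthyPercent: number;
  maximumPercent: number;
}

// Task-count bounds ECS maintains during a rolling deployment.
function deploymentBounds(c: DeploymentConfig): { minRunning: number; maxRunning: number } {
  return {
    minRunning: Math.ceil((c.desiredCount * c.minimumHealthyPercent) / 100),
    maxRunning: Math.floor((c.desiredCount * c.maximumPercent) / 100),
  };
}

// Hypothetical capacity check: does capacity stay at or above `floor`
// if the availability zone running the most tasks is lost?
function survivesAzLoss(tasksPerAz: number[], floor: number): boolean {
  const total = tasksPerAz.reduce((sum, n) => sum + n, 0);
  const largest = Math.max(...tasksPerAz);
  return total - largest >= floor;
}
```

With `desiredCount: 6`, `minimumHealthyPercent: 100`, and `maximumPercent: 200`, ECS keeps at least 6 healthy tasks and may run up to 12, so new tasks come up before old ones stop. Six tasks spread two per zone across three zones still leave four after a zone loss; the same six tasks in two zones leave only three.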

Circuit breakers. Downstream service failures must not cascade. Circuit breaker patterns (implemented via Resilience4j in Java services or custom middleware in TypeScript) stop calling a dependency once failures cross a threshold and fail fast until a cooldown expires, so a failing downstream component cannot exhaust thread pools or connection limits in upstream services.
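A TypeScript breaker can be small. The sketch below is a minimal count-based breaker with closed, open, and half-open states; the failure threshold and cooldown are assumed values, and it is not modeled on Resilience4j's API, which uses sliding-window failure rates and more configuration.

```typescript
type BreakerState = "CLOSED" | "OPEN" | "HALF_OPEN";

// Minimal count-based circuit breaker (illustrative, not a library API).
class CircuitBreaker {
  private state: BreakerState = "CLOSED";
  private failures = 0;
  private openedAt = 0;
  private readonly failureThreshold: number;
  private readonly cooldownMs: number;
  private readonly now: () => number;

  constructor(failureThreshold: number, cooldownMs: number, now: () => number = Date.now) {
    this.failureThreshold = failureThreshold;
    this.cooldownMs = cooldownMs;
    this.now = now; // injectable clock for testing
  }

  getState(): BreakerState {
    return this.state;
  }

  async call<T>(fn: () => Promise<T>): Promise<T> {
    if (this.state === "OPEN") {
      if (this.now() - this.openedAt < this.cooldownMs) {
        // Fail fast: no socket or pooled connection is spent on a known-bad dependency.
        throw new Error("circuit open");
      }
      // Cooldown elapsed: let trial calls through; the next result closes or reopens the circuit.
      this.state = "HALF_OPEN";
    }
    try {
      const result = await fn();
      this.state = "CLOSED";
      this.failures = 0;
      return result;
    } catch (err) {
      this.failures++;
      if (this.state === "HALF_OPEN" || this.failures >= this.failureThreshold) {
        this.state = "OPEN";
        this.openedAt = this.now();
      }
      throw err;
    }
  }
}
```

Wrapping each downstream client in its own breaker keeps one failing dependency from tripping calls to healthy ones.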

Graceful degradation. When correlation confidence falls below a threshold (due to sensor unavailability, communication latency, or data quality issues), the system must degrade gracefully to the highest-confidence subset of data rather than failing silently or halting. This requires explicit degraded-mode logic, not just error handling.
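Explicit degraded-mode logic means the system names its operating mode and decides what to publish in each one, instead of leaving that to an exception path. A minimal sketch, assuming hypothetical mode names and confidence thresholds; the source does not specify how its modes are defined.

```typescript
type Mode = "NOMINAL" | "DEGRADED" | "INSUFFICIENT";

interface TrackEstimate {
  trackId: string;
  confidence: number; // 0.0 to 1.0
}

interface ModeDecision {
  mode: Mode;
  published: TrackEstimate[];
}

// Hypothetical policy: NOMINAL when every track clears nominalMin; otherwise publish only
// tracks at or above degradedMin, highest confidence first, and flag the output DEGRADED.
// If nothing qualifies, report INSUFFICIENT explicitly instead of publishing silence.
function selectOutput(tracks: TrackEstimate[], nominalMin = 0.8, degradedMin = 0.5): ModeDecision {
  if (tracks.length > 0 && tracks.every((t) => t.confidence >= nominalMin)) {
    return { mode: "NOMINAL", published: tracks };
  }
  const subset = tracks
    .filter((t) => t.confidence >= degradedMin)
    .sort((a, b) => b.confidence - a.confidence);
  return subset.length > 0
    ? { mode: "DEGRADED", published: subset }
    : { mode: "INSUFFICIENT", published: [] };
}
```

Because the mode travels with the output, downstream consumers can display or log the degraded state rather than inferring it from missing data.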

Heartbeat and health monitoring. Every service component publishes health metrics to CloudWatch at configured intervals. A health monitor service tracks component state and triggers automated runbooks (SSM Documents) for known failure modes — restarting a container, draining a connection pool, flushing a stuck queue consumer.
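The health monitor's core decision compares each component's last heartbeat with the expected interval and maps known failure modes to a remediation. The sketch below covers only that decision logic. The component fields, the three-missed-heartbeats rule, the lag limit, and the runbook names are all hypothetical; in a deployment, the selected runbook name would be used to start the corresponding Systems Manager document.

```typescript
type FailureMode = "STALE_HEARTBEAT" | "POOL_EXHAUSTED" | "CONSUMER_STUCK";

interface ComponentHealth {
  component: string;
  lastHeartbeat: number; // Unix milliseconds
  activeConnections: number;
  maxConnections: number;
  consumerLagMessages: number;
}

// Hypothetical mapping from failure mode to runbook (SSM document) name.
const RUNBOOKS: Record<FailureMode, string> = {
  STALE_HEARTBEAT: "Runbook-RestartContainer",
  POOL_EXHAUSTED: "Runbook-DrainConnectionPool",
  CONSUMER_STUCK: "Runbook-FlushQueueConsumer",
};

interface Remediation {
  component: string;
  mode: FailureMode;
  runbook: string;
}

function diagnose(components: ComponentHealth[], now: number, heartbeatIntervalMs: number, maxLag: number): Remediation[] {
  const out: Remediation[] = [];
  for (const c of components) {
    let mode: FailureMode | undefined = undefined;
    // Assumed rule: three missed heartbeats means stale. Checked first, because
    // the other metrics from a silent component cannot be trusted.
    if (now - c.lastHeartbeat > 3 * heartbeatIntervalMs) mode = "STALE_HEARTBEAT";
    else if (c.activeConnections >= c.maxConnections) mode = "POOL_EXHAUSTED";
    else if (c.consumerLagMessages > maxLag) mode = "CONSUMER_STUCK";
    if (mode !== undefined) out.push({ component: c.component, mode, runbook: RUNBOOKS[mode] });
  }
  return out;
}
```

Keeping the failure-mode table as data makes adding a new known failure mode a configuration change plus one detection rule.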

Multi-Classification Data Handling

Defense systems frequently process data at multiple classification levels. The architecture must enforce boundaries between classification levels at the infrastructure layer — not just the application layer.

Network boundary enforcement. IL4 and IL5 data operate in separate VPCs with no direct routing between them: no peering, shared transit gateway routes, or shared subnets. Cross-domain data movement (from IL2 to IL4, for example) passes through a controlled cross-domain solution, never through application-layer logic that could be bypassed.

Data tagging at ingestion. Every record entering the system is tagged with its classification level at the point of ingest. Tags are immutable and enforced through IAM resource policies and Lake Formation controls. An IL2-authorized consumer cannot retrieve a record tagged CUI regardless of application-layer access control.
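Tag-based enforcement of this kind lives in policy documents rather than application code. The source names IAM resource policies and Lake Formation; the sketch below swaps in a related S3 variant for illustration. It generates an identity policy for an IL2-authorized consumer role that allows reads only of objects tagged `classification=U`, explicitly denies reads of objects tagged `CUI` through the `s3:ExistingObjectTag/<key>` condition key, and denies changes to object tags so that this role cannot relabel data. The bucket name and tag key are hypothetical.

```typescript
interface Statement {
  Sid: string;
  Effect: "Allow" | "Deny";
  Action: string[];
  Resource: string[];
  Condition?: Record<string, Record<string, string>>;
}

interface PolicyDocument {
  Version: "2012-10-17";
  Statement: Statement[];
}

// Builds a hypothetical identity policy for a consumer authorized only for "U" data.
// Untagged objects match no Allow statement, so they are denied by default.
function il2ConsumerPolicy(bucket: string, tagKey = "classification"): PolicyDocument {
  const objects = `arn:aws-us-gov:s3:::${bucket}/*`; // GovCloud partition ARN
  const tagCondition = `s3:ExistingObjectTag/${tagKey}`;
  return {
    Version: "2012-10-17",
    Statement: [
      {
        Sid: "ReadUnclassifiedOnly",
        Effect: "Allow",
        Action: ["s3:GetObject"],
        Resource: [objects],
        Condition: { StringEquals: { [tagCondition]: "U" } },
      },
      {
        Sid: "DenyCuiReads",
        Effect: "Deny",
        Action: ["s3:GetObject"],
        Resource: [objects],
        Condition: { StringEquals: { [tagCondition]: "CUI" } },
      },
      {
        Sid: "DenyRetagging",
        Effect: "Deny",
        Action: ["s3:PutObjectTagging", "s3:DeleteObjectTagging"],
        Resource: [objects],
      },
    ],
  };
}
```

`JSON.stringify(il2ConsumerPolicy("example-bucket"), null, 2)` produces a document that can be reviewed and managed as infrastructure code; the explicit deny overrides any broader allow the role might pick up elsewhere.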

Audit trail. Every data access is logged to CloudTrail and forwarded to a SIEM; object-level reads (such as S3 `GetObject`) appear only when CloudTrail data events are enabled, because they are not logged by default. For classified data, access logging must capture who accessed what, when, from which system, and on whose authority, satisfying both FISMA continuous monitoring requirements and operational security requirements.
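The fields listed above map naturally onto a record type. The sketch below adds hash chaining: each record stores the SHA-256 hash of its predecessor, so altering or deleting a record breaks verification of the record after it. Hash chaining is an assumed technique for illustration, not something the source specifies (CloudTrail provides its own log file integrity validation), and the field names are hypothetical.

```typescript
import { createHash } from "node:crypto";

interface AuditEvent {
  principal: string; // who
  resource: string; // what
  timestamp: string; // when (ISO 8601)
  sourceSystem: string; // from which system
  authority: string; // on whose authority, e.g. an access grant reference
}

interface AuditRecord extends AuditEvent {
  prevHash: string;
  hash: string;
}

const GENESIS = "0".repeat(64);

function hashRecord(e: AuditEvent, prevHash: string): string {
  // Fixed field order, so the hash does not depend on object key order.
  const canonical = JSON.stringify([e.principal, e.resource, e.timestamp, e.sourceSystem, e.authority, prevHash]);
  return createHash("sha256").update(canonical).digest("hex");
}

function append(log: AuditRecord[], e: AuditEvent): AuditRecord[] {
  const prevHash = log.length > 0 ? log[log.length - 1].hash : GENESIS;
  return [...log, { ...e, prevHash, hash: hashRecord(e, prevHash) }];
}

// Returns the index of the first record that fails verification, or -1 if the chain is intact.
function verify(log: AuditRecord[]): number {
  let prev = GENESIS;
  for (let i = 0; i < log.length; i++) {
    const r = log[i];
    if (r.prevHash !== prev || r.hash !== hashRecord(r, prev)) return i;
    prev = r.hash;
  }
  return -1;
}
```

The chain alone cannot detect truncation of the newest records, which is one reason to also anchor the latest hash somewhere the writer cannot modify.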

For detailed IL4/IL5 boundary architecture, see IL4/IL5 Cloud Architecture for DoD Systems. For cross-domain CUI handling patterns, see CUI Cloud Enclave Architecture on AWS GovCloud.

DevSecOps for Mission-Critical Defense Software

Software that cannot fail operationally requires a deployment pipeline built to stop broken code before it reaches production. Rutagon's defense software pipelines include:

- Automated integration tests covering all sensor event types, including edge cases and malformed inputs

- Chaos engineering tests validating graceful degradation — running in a dedicated test environment that mirrors production architecture

- Performance regression gates — deployments that exceed baseline latency thresholds are automatically rejected before reaching production

- STIG compliance scanning on every container image before deployment, using Trivy with custom policies aligned to DISA SRG controls

- Signed artifacts — every container image is signed with Cosign and verified at deployment time; unsigned images are rejected by OPA/Gatekeeper admission policies
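The performance regression gate in the list above can be as simple as comparing a high percentile from the candidate's load test against the stored baseline with an allowed margin. A minimal sketch, assuming a nearest-rank p99 and a hypothetical 10% tolerance; the source does not say which percentile or margin its pipelines use.

```typescript
// Nearest-rank percentile: the smallest sample with at least p% of samples at or below it.
function percentile(samplesMs: number[], p: number): number {
  if (samplesMs.length === 0) throw new Error("no samples");
  const sorted = [...samplesMs].sort((a, b) => a - b);
  const rank = Math.ceil((p * sorted.length) / 100); // integer math first, avoiding float drift
  return sorted[Math.max(rank, 1) - 1];
}

interface GateResult {
  pass: boolean;
  candidateP99: number;
  limit: number;
}

// Rejects the deployment if candidate p99 exceeds baseline p99 by more than `tolerance`.
function latencyGate(baselineP99Ms: number, candidateSamplesMs: number[], tolerance = 0.1): GateResult {
  const candidateP99 = percentile(candidateSamplesMs, 99);
  const limit = baselineP99Ms * (1 + tolerance);
  return { pass: candidateP99 <= limit, candidateP99, limit };
}
```

A CI step would run the candidate's load test, call `latencyGate`, and exit non-zero when `pass` is false, which blocks promotion. Gating on a tail percentile rather than the mean catches regressions that affect only the slowest requests.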

For the full DevSecOps pipeline stack, see DoD Software Factory: The DevSecOps Stack.

Working With Rutagon

Rutagon builds and maintains cloud-native software for defense programs — real-time data pipelines, fault-tolerant C2 systems, and DevSecOps infrastructure that keeps mission-critical software deployable without sacrificing compliance.

Frequently Asked Questions

What software engineering challenges are unique to missile defense systems?

Mission-critical defense data systems require simultaneous satisfaction of strict latency (real-time processing within defined windows), high availability (no single points of failure, automatic failover), multi-classification data handling (enforced at the infrastructure layer), and rigorous security compliance (STIG, NIST 800-53, continuous monitoring). These constraints must coexist — a design optimized for latency that creates a security boundary gap is not acceptable. This combination eliminates many common commercial software shortcuts.

What cloud infrastructure supports defense data systems?

AWS GovCloud (US-East and US-West regions) holds DoD provisional authorizations up to Impact Level 5, covering CUI and unclassified national security workloads; classified workloads run in separate classified regions. Key services include Amazon MSK (managed Kafka for stream ingestion), DynamoDB (low-latency track state), ECS/EKS (containerized processing), Lambda (event-driven correlation), CloudWatch (health monitoring), and Lake Formation (classification-aware access control). GovCloud's physical separation from commercial AWS regions and its US-person-only access requirements underpin those IL4/IL5 authorizations, though each system built on it still needs its own authorization to operate.

How do defense software systems handle sensor data from multiple sources?

Multi-source sensor fusion uses a track correlation layer that ingests events from heterogeneous sources, normalizes them to a common data model, and associates incoming events with existing tracks using configurable association logic (position proximity, temporal consistency, sensor weighting). Track state is maintained in a low-latency data store (DynamoDB or equivalent) with optimistic locking to handle concurrent updates from multiple consumer processes. The correlation layer outputs unified track objects to downstream consumers via an event bus.

What does fault tolerance mean in a defense software context?

Fault tolerance in defense software means the system continues operating at defined capability levels when individual components fail, and when conditions exceed design parameters it degrades gracefully to the highest-confidence data available rather than halting. This requires multi-AZ deployment, circuit breakers on all downstream dependencies, explicit degraded-mode logic, automated recovery runbooks, and continuous health monitoring that triggers automated remediation before manual intervention is required.

How is classified data handled in cloud-native defense systems?

Classification boundaries are enforced at the infrastructure layer, not the application layer. Each impact level runs in a separate VPC with no direct routing between them. Cross-domain data movement goes through a controlled cross-domain solution. Every data record is tagged with its classification at ingestion, and tags are enforced through IAM and Lake Formation policies that cannot be bypassed through application code. All data access is logged to an immutable audit trail meeting FISMA continuous monitoring requirements.