Service meshes solve a class of problems that become unavoidable at production scale in government cloud: how do you enforce zero trust between microservices, collect the telemetry needed for ATO continuous monitoring, and apply consistent traffic policies — without embedding that logic in application code?

Istio answers all three. This article covers how Rutagon deploys and configures Istio in government-facing Kubernetes environments on AWS GovCloud, with specific attention to the compliance behaviors that matter for FedRAMP and CMMC programs.

Why Service Meshes Matter for Government Workloads

NIST SP 800-204C establishes guidance for microservice-based system security, and the DoD Zero Trust Reference Architecture (2022) identifies service-to-service authentication as a core control. Both documents point toward the same architectural pattern: mutual TLS (mTLS) between all services, enforced at the infrastructure layer rather than in application code.

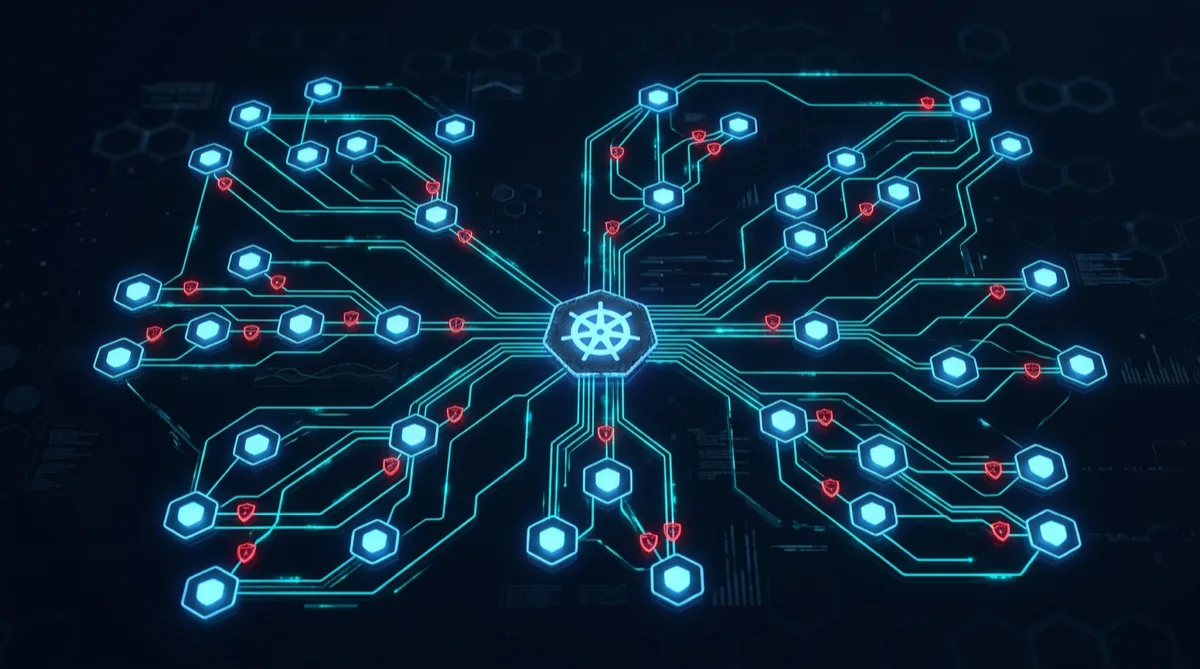

In a traditional deployment, a microservice trusts any other service on the same network segment. In a zero trust architecture, trust is never implicit — every service-to-service call must be authenticated, authorized, and encrypted. Istio implements this as a sidecar proxy (Envoy) injected into each pod, intercepting all traffic before it reaches the application container.

The compliance benefit: you implement zero trust controls once at the mesh layer, rather than rebuilding them in every microservice.

mTLS Enforcement in GovCloud

The starting point for any government Istio deployment is strict mTLS enforcement across the mesh. In Istio, this is controlled by PeerAuthentication and DestinationRule resources.

Rutagon's baseline configuration for government programs:

    apiVersion: security.istio.io/v1beta1
    kind: PeerAuthentication
    metadata:
      name: default
      namespace: production
    spec:
      mtls:
        mode: STRICT

STRICT mode means workloads in the production namespace accept only mTLS traffic; plaintext connections are rejected. Applying the same policy in the Istio root namespace (istio-system by default) extends it mesh-wide. Services that haven't been onboarded to the mesh (legacy or external) are handled through explicit DestinationRule policies that specify DISABLE mode only for those specific endpoints, with all other traffic remaining strict.
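The DISABLE exception for a non-mesh endpoint can be sketched as a DestinationRule scoped to a single host. This is a minimal illustration, not Rutagon's actual configuration: the resource name and the `legacy-reporting.legacy.svc.cluster.local` host are hypothetical.

```yaml
# Sketch: client-side TLS exception for one legacy service without a sidecar.
# The name and host below are hypothetical placeholders.
apiVersion: networking.istio.io/v1beta1
kind: DestinationRule
metadata:
  name: legacy-reporting-plaintext
  namespace: production
spec:
  host: legacy-reporting.legacy.svc.cluster.local
  trafficPolicy:
    tls:
      mode: DISABLE   # sidecars send plaintext to this host only
```

Because the rule names one host, every other destination keeps the mesh default, which keeps the list of exceptions short and easy to review.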

Certificates are managed by Istio's built-in certificate authority (formerly the standalone Citadel component, now part of istiod), which issues short-lived X.509 certificates to each workload based on its Kubernetes service account. Certificate rotation happens automatically, eliminating the operational overhead of manually managing service certificates, and the certificate audit trail provides evidence for NIST SP 800-53 AC-17 (Remote Access) and IA-3 (Device Identification and Authentication) controls.

Authorization Policies for Least-Privilege Service Access

mTLS authenticates — it doesn't authorize. Istio AuthorizationPolicy resources define what authenticated services are permitted to do, implementing the principle of least privilege at the service-to-service layer.

A production authorization policy for a data ingestion service:

    apiVersion: security.istio.io/v1beta1
    kind: AuthorizationPolicy
    metadata:
      name: allow-ingest-only
      namespace: production
    spec:
      selector:
        matchLabels:
          app: data-ingest
      rules:
        - from:
            - source:
                principals:
                  - "cluster.local/ns/production/sa/pipeline-orchestrator"
          to:
            - operation:
                methods: ["POST"]
                paths: ["/api/v1/ingest"]

This policy permits only the pipeline-orchestrator service account to POST to the ingest endpoint. Because an ALLOW policy is attached to the data-ingest workload, any request that does not match a rule is denied. Every service in the mesh carries a corresponding policy, making the authorization matrix visible and auditable in Kubernetes manifests rather than scattered across application code.
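The deny-by-default posture can also be made explicit for the whole namespace, so that a workload without its own ALLOW policy is not left open. A common Istio pattern for this is a policy with an empty spec; the `allow-nothing` name is illustrative, and whether Rutagon applies such a policy is an assumption.

```yaml
# Sketch: namespace-wide default deny. An ALLOW policy with no rules
# matches no requests, so all traffic to workloads in the namespace is
# rejected unless another ALLOW policy permits it.
apiVersion: security.istio.io/v1beta1
kind: AuthorizationPolicy
metadata:
  name: allow-nothing
  namespace: production
spec: {}
```

With this in place, per-service policies such as allow-ingest-only act as narrow exceptions to a documented baseline.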

For FedRAMP AC-3 (Access Enforcement) and AC-6 (Least Privilege), the AuthorizationPolicy manifest set constitutes machine-readable policy documentation — a significant advantage over informal network ACL descriptions.

Traffic Observability for Continuous Monitoring

Istio's sidecars expose detailed telemetry without any application code changes: request rates, error rates, latencies, and circuit-breaker states across every service pair in the mesh.

Rutagon connects this telemetry to ConMon infrastructure through a standard pipeline:

- Prometheus scrapes Istio metrics from the mesh (control plane and data plane)

- Grafana dashboards provide human-readable service health views for program managers and AO staff

- Kiali provides a service topology map — visualization of what's calling what, with real-time traffic rates and error indicators

- Distributed tracing (Jaeger or AWS X-Ray) captures request traces across service boundaries for incident investigation
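Access logging and trace sampling for the pipeline above can be switched on mesh-wide with Istio's Telemetry API. The following is a sketch under assumptions: `envoy` is Istio's built-in access log provider, while `jaeger` is a hypothetical tracing provider name that would have to be defined under `meshConfig.extensionProviders`. The 10% sampling rate is illustrative.

```yaml
# Sketch: mesh-wide telemetry defaults, applied in the Istio root namespace.
apiVersion: telemetry.istio.io/v1alpha1
kind: Telemetry
metadata:
  name: mesh-default
  namespace: istio-system
spec:
  accessLogging:
    - providers:
        - name: envoy            # built-in Envoy access log provider
  tracing:
    - providers:
        - name: jaeger           # hypothetical; must exist in meshConfig.extensionProviders
      randomSamplingPercentage: 10.0
```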

The continuous monitoring implication: when an anomaly occurs, such as an unexpected error spike or an unusual traffic pattern between services, the mesh telemetry provides evidence to support the 72-hour cyber incident reporting requirement in DFARS 252.204-7012. Without a mesh, correlating traffic patterns across individual pod logs is time-intensive. With Istio telemetry, the service interaction data is already aggregated and queryable.
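As one way to surface an error spike automatically, a Prometheus alerting rule can be written against Istio's standard `istio_requests_total` metric. This is a sketch in plain Prometheus rule-file format. The group name, alert name, 5% threshold and 10-minute window are illustrative assumptions, not values from any specific program.

```yaml
# Sketch: alert when more than 5% of requests to a service return 5xx.
groups:
  - name: istio-conmon
    rules:
      - alert: MeshHigh5xxRate
        expr: |
          sum by (destination_service) (
            rate(istio_requests_total{reporter="destination", response_code=~"5.."}[5m])
          )
          /
          sum by (destination_service) (
            rate(istio_requests_total{reporter="destination"}[5m])
          ) > 0.05
        for: 10m
        labels:
          severity: warning
        annotations:
          summary: "5xx rate above 5% for {{ $labels.destination_service }}"
```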

Traffic Management for GovCloud Deployments

Istio's traffic management capabilities support deployment patterns required by government programs:

Canary deployments: Route 5% of traffic to a new service version before full rollout — critical for programs where failed deployments trigger ATO re-review. Rollback is a single manifest change, not a redeployment.
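A 95/5 split of this kind is typically expressed with a DestinationRule that defines version subsets and a VirtualService that weights them. The sketch below assumes the data-ingest pods carry `version: v1` and `version: v2` labels; the subset names are hypothetical.

```yaml
# Sketch: subsets keyed on an assumed "version" pod label.
apiVersion: networking.istio.io/v1beta1
kind: DestinationRule
metadata:
  name: data-ingest
  namespace: production
spec:
  host: data-ingest.production.svc.cluster.local
  subsets:
    - name: stable
      labels:
        version: v1
    - name: canary
      labels:
        version: v2
---
# Sketch: send 5% of requests to the canary subset.
apiVersion: networking.istio.io/v1beta1
kind: VirtualService
metadata:
  name: data-ingest
  namespace: production
spec:
  hosts:
    - data-ingest.production.svc.cluster.local
  http:
    - route:
        - destination:
            host: data-ingest.production.svc.cluster.local
            subset: stable
          weight: 95
        - destination:
            host: data-ingest.production.svc.cluster.local
            subset: canary
          weight: 5
```

Rolling back means setting the weights to 100 and 0 and re-applying the VirtualService; the pods themselves are untouched.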

Circuit breakers: Prevent cascading failure when a downstream service degrades. Configurable in DestinationRule resources with outlier detection thresholds.
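A circuit breaker along these lines might look like the following DestinationRule. The `records-api` host is a hypothetical downstream service, and every threshold is an illustrative starting point to be tuned against observed traffic.

```yaml
# Sketch: connection limits plus outlier detection for one downstream host.
apiVersion: networking.istio.io/v1beta1
kind: DestinationRule
metadata:
  name: records-api-circuit-breaker
  namespace: production
spec:
  host: records-api.production.svc.cluster.local
  trafficPolicy:
    connectionPool:
      tcp:
        maxConnections: 100
      http:
        http1MaxPendingRequests: 50
        maxRequestsPerConnection: 10
    outlierDetection:
      consecutive5xxErrors: 5   # eject a host after 5 consecutive 5xx responses
      interval: 30s             # how often hosts are evaluated
      baseEjectionTime: 60s     # minimum ejection duration
      maxEjectionPercent: 50    # never eject more than half the hosts
```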

Retry and timeout policies: Standardized across the mesh without requiring each service to implement its own retry logic. Reduces partial failure modes that complicate incident reconstruction.
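Retries and timeouts are set per route in a VirtualService. In this sketch the `records-api` host is hypothetical and the values are illustrative. `attempts` counts retries after the initial request, so the overall `timeout` should cover the worst case.

```yaml
# Sketch: bounded retries with a per-try and an overall timeout.
apiVersion: networking.istio.io/v1beta1
kind: VirtualService
metadata:
  name: records-api
  namespace: production
spec:
  hosts:
    - records-api.production.svc.cluster.local
  http:
    - route:
        - destination:
            host: records-api.production.svc.cluster.local
      timeout: 10s
      retries:
        attempts: 3
        perTryTimeout: 2s
        retryOn: 5xx,connect-failure,reset
```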

Traffic mirroring: Shadow production traffic to a new service version for observability before routing real users to it — useful for testing security controls in IL4/IL5 environments where production-equivalent data can't easily be replicated in test environments.
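Mirroring is also configured on the VirtualService route. The sketch below assumes a DestinationRule for data-ingest already defines `stable` and `candidate` subsets; both names are hypothetical. Responses from the mirrored copy are discarded by the proxy, so callers only ever see the stable version's response.

```yaml
# Sketch: serve all traffic from "stable" and shadow a copy to "candidate".
apiVersion: networking.istio.io/v1beta1
kind: VirtualService
metadata:
  name: data-ingest-mirror
  namespace: production
spec:
  hosts:
    - data-ingest.production.svc.cluster.local
  http:
    - route:
        - destination:
            host: data-ingest.production.svc.cluster.local
            subset: stable
          weight: 100
      mirror:
        host: data-ingest.production.svc.cluster.local
        subset: candidate
      mirrorPercentage:
        value: 100.0
```

Mirrored requests still reach the candidate's backends, so write paths need care to avoid duplicate side effects.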

Hardening Istio for Government Programs

A production-ready government Istio deployment requires several hardening steps beyond default installation:

Control plane isolation: The Istio control plane (istiod) runs in a dedicated namespace with strict RBAC and network policies preventing workloads from accessing it directly.
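One way to express the network-policy half of this is a Kubernetes NetworkPolicy on the istiod pods that admits only the ports the data plane and API server need. This is a sketch under several assumptions: a CNI that enforces NetworkPolicy, the default `app: istiod` pod label, and a hypothetical `monitoring` namespace for Prometheus. It should be validated in a non-production cluster before use.

```yaml
# Sketch: restrict ingress to istiod to its service ports.
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: istiod-ingress
  namespace: istio-system
spec:
  podSelector:
    matchLabels:
      app: istiod
  policyTypes:
    - Ingress
  ingress:
    - ports:
        - protocol: TCP
          port: 15012   # xDS and certificate signing over TLS
        - protocol: TCP
          port: 15017   # injection and validation webhooks
    - from:
        - namespaceSelector:
            matchLabels:
              kubernetes.io/metadata.name: monitoring   # hypothetical namespace
      ports:
        - protocol: TCP
          port: 15014   # control plane metrics
```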

Ingress gateway hardening: The Istio ingress gateway terminates external TLS, enforces minimum TLS version (1.2+, TLS 1.3 preferred for new programs), and applies WAF-equivalent policies through Envoy filters.
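The minimum TLS version is set on the Gateway resource. In this sketch the hostname, the Gateway name and the `credentialName` secret are hypothetical, and the selector assumes the default `istio: ingressgateway` label on the gateway pods.

```yaml
# Sketch: HTTPS listener that refuses anything below TLS 1.2.
apiVersion: networking.istio.io/v1beta1
kind: Gateway
metadata:
  name: public-gateway
  namespace: istio-system
spec:
  selector:
    istio: ingressgateway
  servers:
    - port:
        number: 443
        name: https
        protocol: HTTPS
      hosts:
        - "app.example.gov"
      tls:
        mode: SIMPLE
        credentialName: app-example-gov-tls   # TLS secret in the gateway's namespace
        minProtocolVersion: TLSV1_2           # TLSV1_3 for programs that can require it
```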

Sidecar resource limits: In GovCloud environments with resource-constrained node pools (for example, GPU nodes where CPU and memory headroom is limited), explicit sidecar CPU and memory requests and limits prevent the proxy from contending with application containers.
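Per-workload proxy sizing can be set with Istio's sidecar annotations on the pod template. The Deployment below is a sketch: the image, replica count and resource values are illustrative assumptions rather than recommended settings.

```yaml
# Sketch: override the injected proxy's requests and limits for one workload.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: data-ingest
  namespace: production
spec:
  replicas: 2
  selector:
    matchLabels:
      app: data-ingest
  template:
    metadata:
      labels:
        app: data-ingest
      annotations:
        sidecar.istio.io/proxyCPU: "100m"          # CPU request
        sidecar.istio.io/proxyCPULimit: "500m"     # CPU limit
        sidecar.istio.io/proxyMemory: "128Mi"      # memory request
        sidecar.istio.io/proxyMemoryLimit: "256Mi" # memory limit
    spec:
      serviceAccountName: data-ingest
      containers:
        - name: app
          image: registry.example.gov/data-ingest:1.0.0   # hypothetical image
```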

Ambient mode evaluation: Istio's ambient mode (generally available since Istio 1.24) eliminates sidecars in favor of a per-node ztunnel DaemonSet for L4 mTLS, with optional waypoint proxies for L7 policy, reducing per-pod overhead. Rutagon evaluates ambient mode on new programs where the simplified architecture aligns with the program's threat model and compliance requirements.
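For evaluation, ambient mode is enabled per namespace with a label rather than by injecting sidecars. The sketch assumes Istio was installed with the ambient profile; the namespace name is hypothetical.

```yaml
# Sketch: enroll one namespace in the ambient data plane.
apiVersion: v1
kind: Namespace
metadata:
  name: pilot-ambient
  labels:
    istio.io/dataplane-mode: ambient
```

Enrolling a single namespace this way allows a side-by-side comparison with sidecar-based namespaces in the same cluster.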

Government programs running containerized workloads in AWS GovCloud can reach Rutagon at contact@rutagon.com to discuss service mesh architecture for their specific ATO environment.

Frequently Asked Questions

Why use Istio instead of AWS App Mesh for government workloads?

AWS App Mesh is tightly integrated with AWS services but is less feature-complete than Istio for policy-driven zero trust architectures. Istio's AuthorizationPolicy and PeerAuthentication primitives map directly to NIST and DoD zero trust controls with machine-readable policy artifacts. For programs requiring portability across IL environments or multi-cloud deployments, Istio also avoids AWS-specific lock-in. Rutagon uses Istio for programs where compliance policy expressiveness and portability matter.

Does Istio satisfy DoD Zero Trust requirements?

Istio addresses parts of several DoD ZTA pillars: workload-to-workload authentication via mTLS, request-level authorization via AuthorizationPolicy, and network segmentation via namespace-level traffic isolation. It doesn't address identity pillar controls (user authentication and federation, handled by OIDC/CAC integrations) or data pillar controls (handled at the storage and application layer). Istio is part of a ZTA implementation, not a complete solution.
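Namespace-level isolation of the kind mentioned above can be sketched as an AuthorizationPolicy that admits only callers from the same namespace. The policy name is hypothetical, and the `namespaces` source match relies on mTLS being enforced so that caller identity is available.

```yaml
# Sketch: workloads in "production" accept requests only from "production".
apiVersion: security.istio.io/v1beta1
kind: AuthorizationPolicy
metadata:
  name: same-namespace-only
  namespace: production
spec:
  action: ALLOW
  rules:
    - from:
        - source:
            namespaces: ["production"]
```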

What is the performance overhead of Istio in GovCloud Kubernetes?

Istio's Envoy sidecar adds approximately 3–5ms of latency per hop and requires 100–150m CPU and 128–256Mi memory per pod. For most government workload profiles, this overhead is acceptable. Programs with strict latency requirements (real-time telemetry, time-critical data pipelines) evaluate ambient mode or selective sidecar injection for high-throughput paths.

How does Istio support FedRAMP continuous monitoring?

Istio's service metrics (request rates, error rates, latencies) feed directly into Prometheus-based ConMon dashboards. The distributed tracing integration (Jaeger, X-Ray) supports incident reconstruction. AuthorizationPolicy manifests provide machine-readable evidence for AC-3 and AC-6 control documentation. Together, these artifacts support FedRAMP's continuous monitoring deliverables without additional instrumentation.

Can Istio be used in AWS GovCloud (US) regions?

Yes. Istio runs on any CNCF-conformant Kubernetes cluster, including Amazon EKS in GovCloud (us-gov-east-1, us-gov-west-1). Amazon EKS managed node groups, custom AMIs, and GovCloud-specific networking (VPC, PrivateLink) are all compatible with Istio deployment. Rutagon has deployed Istio-based service meshes in GovCloud EKS environments for IL2 and IL4 programs.