Zero-downtime database migration is one of the hardest problems in production systems engineering. Schema changes that seem trivial in development — adding a column, renaming a table, migrating to a new engine — become high-risk operations when production traffic is flowing and SLAs demand 99.99% availability. At Rutagon, we've shipped database migrations across government and commercial systems without a single minute of planned downtime, using patterns that treat data integrity and availability as non-negotiable constraints.

This article covers the strategies we deploy: expand-contract migrations, blue-green database patterns, dual-write architectures, and the operational tooling that makes zero-downtime migrations repeatable rather than heroic.

The Problem with Traditional Migrations

Traditional migration workflows follow a simple sequence: stop the application, run the migration script, start the application. This "big bang" approach works for internal tools with maintenance windows, but fails for systems where downtime has real consequences — citizen-facing government portals, real-time telemetry dashboards, or production SaaS platforms processing continuous transactions.

The risks compound with scale:

- Lock contention:

ALTER TABLEon a 500GB PostgreSQL table acquires anACCESS EXCLUSIVElock, blocking all reads and writes until the operation completes. - Rollback complexity: Once a destructive migration runs (dropping columns, changing types), rollback requires restoring from backup.

- Coupled deployments: Application code and schema changes must deploy simultaneously, creating a window where either the old code hits the new schema or vice versa.

Government systems operating under FedRAMP continuous monitoring requirements can't afford scheduled downtime for routine schema changes. The architecture must support migration as a normal operational activity.

Expand-Contract: The Foundation Pattern

Every zero-downtime migration follows the expand-contract pattern, even when the specific implementation varies. The phases:

- Expand: Add the new schema elements alongside the existing ones. Both old and new application versions work against the expanded schema.

- Migrate: Backfill data from the old structure to the new one while the application runs.

- Contract: Remove the old schema elements once all application versions use the new structure.

Each phase deploys independently. If phase two reveals a data quality issue, phase one hasn't broken anything — the old columns and tables still exist and function.

Example: Renaming a Column

Renaming user_email to email_address without downtime:

-- Phase 1: Expand — add new column, backfill, add trigger

ALTER TABLE users ADD COLUMN email_address VARCHAR(255);

UPDATE users SET email_address = user_email WHERE email_address IS NULL;

CREATE OR REPLACE FUNCTION sync_email_columns()

RETURNS TRIGGER AS $$

BEGIN

IF TG_OP = 'INSERT' OR NEW.user_email IS DISTINCT FROM OLD.user_email THEN

NEW.email_address := NEW.user_email;

END IF;

IF TG_OP = 'INSERT' OR NEW.email_address IS DISTINCT FROM OLD.email_address THEN

NEW.user_email := NEW.email_address;

END IF;

RETURN NEW;

END;

$$ LANGUAGE plpgsql;

CREATE TRIGGER trg_sync_email

BEFORE INSERT OR UPDATE ON users

FOR EACH ROW EXECUTE FUNCTION sync_email_columns();-- Phase 2: Deploy application code reading from email_address

-- Phase 3: Contract — remove old column and trigger

DROP TRIGGER trg_sync_email ON users;

DROP FUNCTION sync_email_columns();

ALTER TABLE users DROP COLUMN user_email;The trigger keeps both columns synchronized during the transition. Application versions reading user_email or email_address both get correct data.

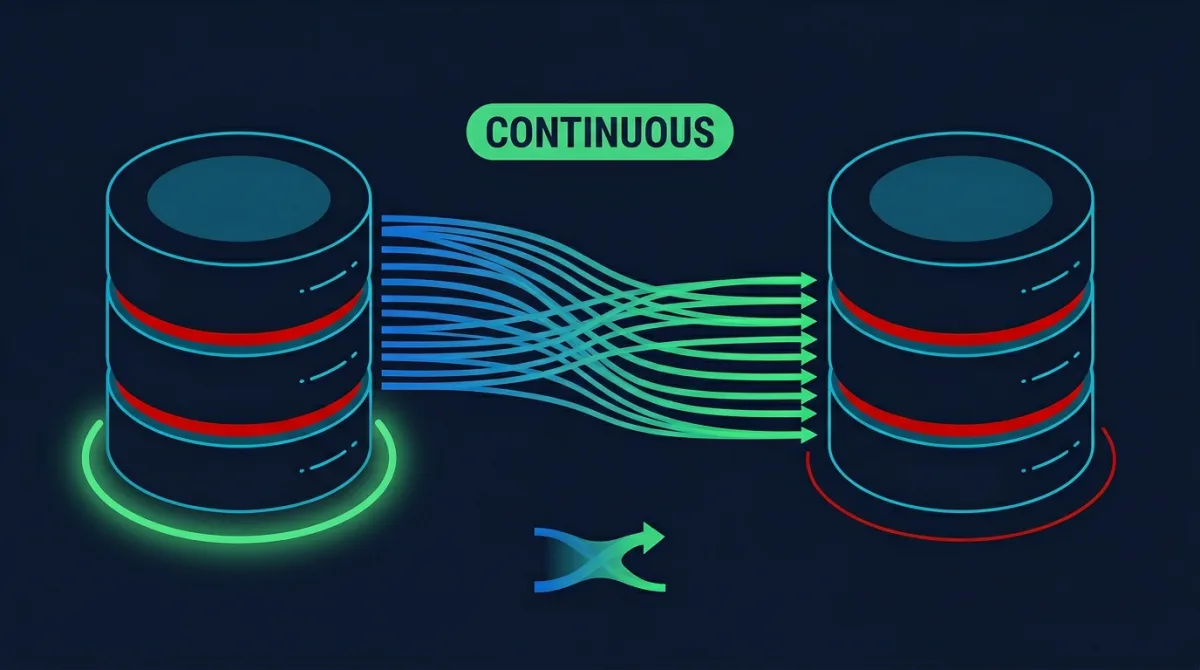

Blue-Green Database Migrations

For engine migrations — moving from MySQL to PostgreSQL, or from self-managed RDS to Aurora Serverless v2 — the blue-green pattern operates at the database level:

┌─────────────┐ ┌──────────────────┐

│ Application │────▶│ Blue DB (current) │

│ Fleet │ └──────────────────┘

│ │ │ replication

│ │ ┌──────────────────┐

│ │╌╌╌▶ │ Green DB (target) │

└─────────────┘ └──────────────────┘Implementation with AWS DMS

AWS Database Migration Service handles continuous replication from blue to green:

resource "aws_dms_replication_task" "blue_to_green" {

replication_task_id = "blue-green-migration"

source_endpoint_arn = aws_dms_endpoint.blue_source.arn

target_endpoint_arn = aws_dms_endpoint.green_target.arn

migration_type = "full-load-and-cdc"

replication_task_settings = jsonencode({

TargetMetadata = {

ParallelLoadThreads = 8

BatchApplyEnabled = true

}

FullLoadSettings = {

TargetTablePrepMode = "TRUNCATE_BEFORE_LOAD"

}

ChangeProcessingTuning = {

BatchApplyPreserveTransaction = true

}

})

table_mappings = jsonencode({

rules = [{

rule-type = "selection"

rule-id = "1"

rule-name = "migrate-all"

object-locator = {

schema-name = "public"

table-name = "%"

}

rule-action = "include"

}]

})

}The full-load-and-cdc migration type performs an initial full copy, then switches to change data capture (CDC) for ongoing replication. Once replication lag drops to zero, the cutover is a DNS change or connection string update — typically under one second of effective downtime.

Validation Before Cutover

We never cut over without automated validation:

def validate_migration(blue_conn, green_conn, tables: list[str]) -> bool:

for table in tables:

blue_count = blue_conn.execute(f"SELECT COUNT(*) FROM {table}").scalar()

green_count = green_conn.execute(f"SELECT COUNT(*) FROM {table}").scalar()

if blue_count != green_count:

logger.error(f"Row count mismatch on {table}: {blue_count} vs {green_count}")

return False

blue_hash = blue_conn.execute(

f"SELECT MD5(STRING_AGG(t::text, '')) FROM {table} t ORDER BY id"

).scalar()

green_hash = green_conn.execute(

f"SELECT MD5(STRING_AGG(t::text, '')) FROM {table} t ORDER BY id"

).scalar()

if blue_hash != green_hash:

logger.error(f"Checksum mismatch on {table}")

return False

return TrueDual-Write for Gradual Migration

When migrating between data stores with different models — relational to document, SQL to DynamoDB — dual-write patterns allow incremental migration without a hard cutover:

class DualWriteRepository:

def __init__(self, primary_db, secondary_db, read_from: str = "primary"):

self.primary = primary_db

self.secondary = secondary_db

self.read_from = read_from

async def create(self, entity: dict) -> str:

primary_result = await self.primary.insert(entity)

try:

await self.secondary.insert(entity)

except Exception as e:

logger.warning(f"Secondary write failed: {e}")

await self._queue_for_reconciliation(entity)

return primary_result.id

async def read(self, entity_id: str) -> dict:

if self.read_from == "primary":

return await self.primary.get(entity_id)

return await self.secondary.get(entity_id)The read_from flag controls which store serves reads. Start with primary, flip to secondary behind a feature flag, monitor for inconsistencies, then decommission the primary once confidence is established.

This is the same pattern we used when migrating a production SaaS platform's core data layer — writes flowed to both stores for two weeks before we fully committed to the new architecture. The cloud infrastructure patterns we deploy make this kind of incremental migration safe and observable.

Operational Tooling for Safe Migrations

pg_repack for Lock-Free Operations

PostgreSQL's ALTER TABLE operations that rewrite the table (changing column types, adding constraints) acquire exclusive locks. pg_repack performs these operations online:

pg_repack --table users --only-indexes -d production_db

pg_repack --table orders -d production_dbMigration State Tracking

We track every migration through a state machine persisted in the database itself:

CREATE TABLE schema_migrations_v2 (

id SERIAL PRIMARY KEY,

migration_name VARCHAR(255) NOT NULL,

phase VARCHAR(20) NOT NULL CHECK (phase IN ('expand', 'migrate', 'contract')),

status VARCHAR(20) NOT NULL CHECK (status IN ('pending', 'running', 'completed', 'failed')),

started_at TIMESTAMPTZ,

completed_at TIMESTAMPTZ,

rollback_sql TEXT,

metadata JSONB DEFAULT '{}'

);Every expand phase records its corresponding contract SQL in rollback_sql. If anything goes wrong, the rollback path is documented and tested before the migration begins.

Monitoring During Migration

We instrument migrations with custom CloudWatch metrics and integrate them into the observability dashboards we maintain for every production system:

- Replication lag (for blue-green migrations)

- Write latency percentiles (to detect lock contention)

- Error rates by table (to catch constraint violations during backfill)

- Dual-write consistency (comparing record counts between stores)

This ties into our broader DevOps pipeline approach where migrations are automated pipeline stages, not manual runbook procedures.

Frequently Asked Questions

How long does a typical zero-downtime migration take?

It depends on the data volume and migration type. Schema changes using expand-contract complete in minutes. Engine migrations with DMS depend on dataset size — a 100GB database typically achieves full-load in 2-4 hours, with CDC keeping replication lag under one second. The actual cutover is near-instantaneous.

Can you roll back a zero-downtime migration?

Yes, and that's the primary advantage. During the expand phase, the old schema still functions. During blue-green migration, the blue database remains untouched until decommissioned. Dual-write patterns allow switching reads back to the primary at any time. Rollback paths are documented and tested before migration begins.

What about foreign key constraints during expand-contract?

Foreign keys require careful ordering. Add new foreign key references during the expand phase using NOT VALID initially, then validate in a separate step. This avoids full table scans under lock: ALTER TABLE orders ADD CONSTRAINT fk_user FOREIGN KEY (user_id) REFERENCES users(id) NOT VALID; followed by ALTER TABLE orders VALIDATE CONSTRAINT fk_user; — the validate step only acquires a SHARE UPDATE EXCLUSIVE lock.

How do you handle migrations in multi-region deployments?

Multi-region migrations add coordination complexity. We sequence migrations region by region, starting with the secondary region. If the migration causes issues, traffic fails over to the primary region running the original schema. Global tables (DynamoDB) or Aurora Global Database handle cross-region replication natively, but schema changes still require expand-contract to avoid version conflicts.

Does Aurora Serverless v2 simplify database migrations?

Aurora Serverless v2 eliminates capacity planning during migration — the database scales compute automatically to handle migration workloads alongside production traffic. Blue-green deployments with Aurora use AWS's native blue-green deployment feature, which handles replication, validation, and cutover with minimal operational overhead.

Discuss your project with Rutagon

Contact Us →