Application security testing in government programs isn't optional or periodic — it's a continuous CI/CD pipeline requirement backed by NIST 800-53 controls, FedRAMP ConMon obligations, and in DoD programs, DISA STIG requirements for application scanning. The question isn't whether to scan; it's how to make scanning effective, automated, and compliant with evidence retention requirements.

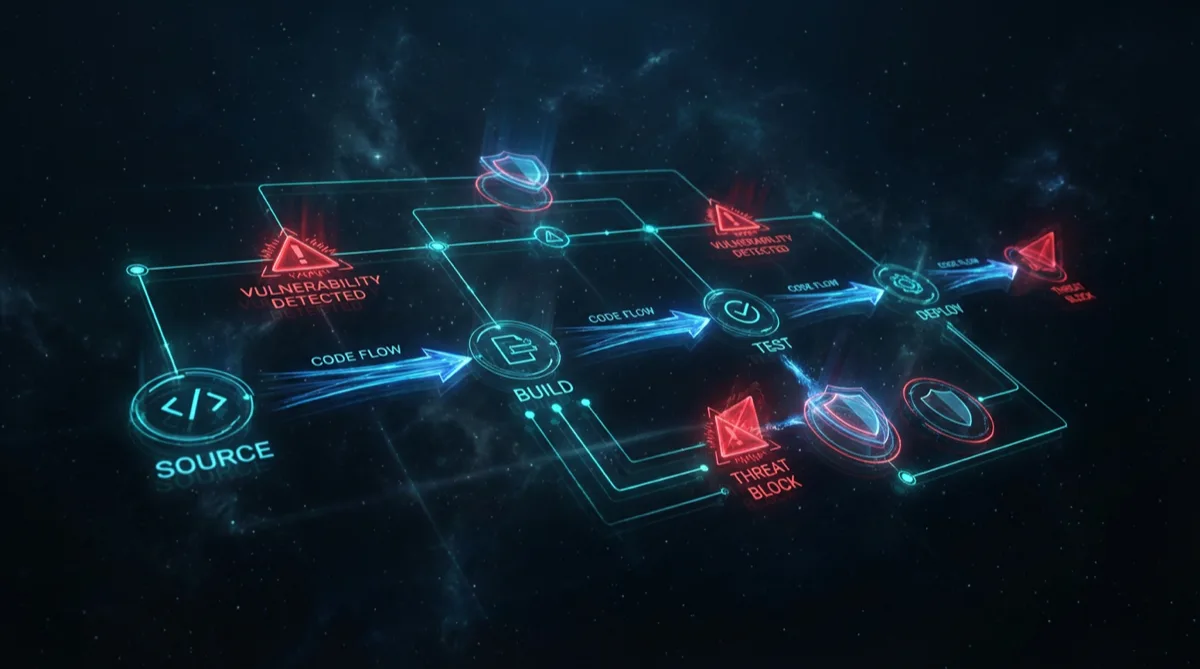

Here's how Rutagon integrates application security testing into production government CI/CD pipelines.

Why AppSec Testing in CI/CD Is Required (Not Optional)

Three overlapping requirements drive continuous AppSec testing in government programs:

NIST 800-53 SA-11 (Developer Testing and Evaluation): Requires the system developer to plan, perform, and document security testing and to correct the flaws it finds. The control's enhancements cover static code analysis (SA-11(1)), penetration testing (SA-11(5)), and dynamic code analysis (SA-11(8)), which is where SAST and DAST come in. In FedRAMP continuous monitoring, SA-11 is tested annually, and the assessor expects to see evidence of automated security scanning, not just a statement that it happens.

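RA-5 (Vulnerability Monitoring and Scanning): Requires vulnerability scanning of information systems and applications. FedRAMP sets timed remediation SLAs: High within 30 days, Moderate within 90 days, and Low within 180 days. FedRAMP does not define a separate Critical tier, so Critical scanner findings fall under the High window unless a stricter rule applies, such as the 15-day deadline CISA BOD 19-02 sets for critical vulnerabilities on internet-accessible systems. These SLAs are only achievable if scanning is automated and findings are tracked continuously.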

DISA STIG for Application Security: The DoD Application Security and Development (ASD) STIG (V4R11 and later releases) includes requirements for SAST integration, dependency scanning, and documented vulnerability management procedures. Programs with DoD ATO requirements must demonstrate compliance with applicable STIG controls.

Static Application Security Testing (SAST)

SAST analyzes source code without executing it, identifying vulnerabilities like SQL injection, command injection, XSS, hard-coded credentials, insecure deserialization, and incorrect cryptographic usage.
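To make the SQL injection case concrete, the sketch below contrasts string-concatenated SQL, the shape a SAST rule typically flags, with a parameterized query. It uses Python's standard sqlite3 module with an in-memory database; the table, function names, and payload are illustrative assumptions, not taken from any Rutagon codebase.

```python
import sqlite3


def find_user_unsafe(conn: sqlite3.Connection, user_id: str) -> list[tuple]:
    # Vulnerable: user input is concatenated into the SQL text,
    # so input like "0 OR 1=1" changes the meaning of the query.
    cursor = conn.cursor()
    cursor.execute("SELECT id, name FROM users WHERE id = " + user_id)
    return cursor.fetchall()


def find_user_safe(conn: sqlite3.Connection, user_id: str) -> list[tuple]:
    # Safe: the driver binds the value, so it is never parsed as SQL.
    cursor = conn.cursor()
    cursor.execute("SELECT id, name FROM users WHERE id = ?", (user_id,))
    return cursor.fetchall()


def demo() -> tuple[list[tuple], list[tuple]]:
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
    payload = "0 OR 1=1"  # attacker-controlled input
    return find_user_unsafe(conn, payload), find_user_safe(conn, payload)
```

Calling demo() shows the difference: the concatenated query returns every row in the table, while the parameterized query treats the payload as a plain value and matches nothing.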

Tools Rutagon uses in production:

Semgrep: Fast, customizable SAST that runs in CI/CD. Supports Python, TypeScript, Go, Java, and most common languages. The open-source ruleset covers OWASP Top 10; Semgrep Pro adds supply chain and secrets detection.

    # .semgrep.yml: production SAST configuration
    rules:
      - id: hardcoded-aws-credential
        patterns:
          # Match any string assignment, then narrow to AWS access key IDs.
          - pattern: |
              $KEY = "$VALUE"
          - metavariable-regex:
              metavariable: $VALUE
              regex: ^AKIA[0-9A-Z]{16}$
        message: "Hardcoded AWS credential detected"
        severity: ERROR
        languages: [python, typescript, javascript]

      - id: sql-injection-raw
        patterns:
          # "..." matches any string literal; $INPUT is the concatenated value.
          - pattern: |
              $CURSOR.execute("..." + $INPUT, ...)
        message: "Potential SQL injection via string concatenation"
        severity: ERROR
        languages: [python]

Bandit: Python-specific SAST, simpler than Semgrep but fast. Good for Python-heavy backend services.

ESLint Security Plugin: For TypeScript/JavaScript, the eslint-plugin-security package adds security-focused linting rules inline with regular code quality checks.

GitLab SAST Integration: GitLab CI has native SAST configuration that runs appropriate analyzers per language automatically:

    # .gitlab-ci.yml: SAST integration
    include:
      - template: Security/SAST.gitlab-ci.yml
      - template: Security/Secret-Detection.gitlab-ci.yml
      - template: Security/Dependency-Scanning.gitlab-ci.yml

    variables:
      SAST_EXCLUDED_PATHS: "spec,test,tests,tmp"
      # Report findings to Security dashboard
      SAST_REPORT_TYPE: "sarif"

    sast:
      stage: test
      artifacts:
        reports:
          sast: gl-sast-report.json
        expire_in: 90 days  # FedRAMP ConMon retention requirement

Dynamic Application Security Testing (DAST)

DAST tests running applications, simulating attacker behavior to find vulnerabilities that only manifest at runtime: authentication bypasses, broken access control, insecure API endpoints, session management flaws.

OWASP ZAP: The most widely used open-source DAST tool, with both passive scan (traffic analysis) and active scan (automated attack simulation) modes.

For API-heavy government applications (most modern systems), ZAP's OpenAPI support is valuable — you provide the API spec and ZAP automatically fuzzes all endpoints:

    # DAST stage in CI/CD: ZAP API scan
    dast-api-scan:
      stage: security-test
      # Formerly published as owasp/zap2docker-stable
      image: ghcr.io/zaproxy/zaproxy:stable
      variables:
        TARGET_URL: "https://$STAGING_API_URL"
        API_SPEC: "./openapi.yaml"
      script:
        # -t must resolve to the OpenAPI definition: a URL that serves the spec,
        #    or a spec file placed where the ZAP container can read it (/zap/wrk)
        # -I keeps warnings from failing the build
        # --hook loads custom rules to reduce false positives
        - >
          zap-api-scan.py -t "$TARGET_URL"
          -f openapi
          -I
          -J zap-report.json
          -x zap-report.xml
          --hook hook.py
      artifacts:
        reports:
          dast: gl-dast-report.json
        paths:
          - zap-report.json
        expire_in: 90 days
      environment:
        name: staging
        url: $TARGET_URL

Nuclei: Template-based vulnerability scanner that runs targeted checks against known CVEs and misconfigurations. Faster than full DAST for CI/CD integration, useful for checking specific vulnerability classes.
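Returning to the ZAP job: the zap-report.json artifact it keeps can be summarized before findings are handed to a tracker. The following is a minimal sketch that assumes ZAP's traditional JSON report layout (a top-level site list, each with alerts carrying a string riskcode from 0 to 3). The sample data is hand-written, and the gating function is a hypothetical policy, not part of ZAP.

```python
from collections import Counter

# Assumed mapping of ZAP's string risk codes to names.
RISK_NAMES = {"0": "Informational", "1": "Low", "2": "Medium", "3": "High"}


def summarize_zap_report(report: dict) -> Counter:
    """Count alerts by risk level across every scanned site."""
    counts: Counter = Counter()
    for site in report.get("site", []):
        for alert in site.get("alerts", []):
            risk = RISK_NAMES.get(str(alert.get("riskcode")), "Unknown")
            counts[risk] += 1
    return counts


def should_fail_build(counts: Counter, blocking=("High",)) -> bool:
    """Hypothetical gate: fail when any alert at a blocking risk level exists."""
    return any(counts[risk] > 0 for risk in blocking)


# Hand-written sample in the assumed report shape (not real scan output).
SAMPLE_REPORT = {
    "site": [
        {
            "@name": "https://staging.example.test",
            "alerts": [
                {"alert": "SQL Injection", "riskcode": "3"},
                {"alert": "X-Content-Type-Options Header Missing", "riskcode": "1"},
                {"alert": "Information Disclosure", "riskcode": "0"},
            ],
        }
    ]
}
```

In a pipeline, the report would be loaded with json.load from the artifact file, and the summary could be attached to the job log or pushed to the vulnerability tracker.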

Container and Dependency Scanning

Beyond application code, the supply chain — base container images and third-party dependencies — must be scanned:

Trivy for container images:

    container-scan:
      stage: security-test
      image:
        name: aquasec/trivy:latest
        entrypoint: [""]  # the image's default entrypoint is trivy itself
      script:
        # --exit-code 1 fails the build on critical/high findings
        - >
          trivy image
          --severity CRITICAL,HIGH
          --exit-code 1
          --format sarif
          --output trivy-results.sarif
          $CI_REGISTRY_IMAGE:$CI_COMMIT_SHA
      artifacts:
        # SARIF is stored as a plain artifact; GitLab's container_scanning
        # report type expects GitLab's own JSON schema instead.
        when: always
        paths:
          - trivy-results.sarif
        expire_in: 90 days
      allow_failure: false

The --exit-code 1 on --severity CRITICAL,HIGH blocks deployments with unpatched critical/high CVEs, enforcing the FedRAMP ConMon SLA requirement at the pipeline level rather than relying on manual tracking.
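The gating logic can also be reproduced outside the scanner, for example to feed findings into a tracker. This sketch assumes Trivy's JSON output (--format json, not the SARIF used in the job above), where each entry under Results carries an optional Vulnerabilities list. The sample report is hand-written with placeholder IDs, and the function names are illustrative.

```python
BLOCKING = {"CRITICAL", "HIGH"}


def blocking_findings(report: dict, blocking=BLOCKING) -> list[dict]:
    """Return vulnerabilities whose severity would block the build."""
    findings = []
    for result in report.get("Results", []):
        # "Vulnerabilities" may be absent or null for targets with no findings.
        for vuln in result.get("Vulnerabilities") or []:
            if vuln.get("Severity") in blocking:
                findings.append({
                    "target": result.get("Target"),
                    "id": vuln.get("VulnerabilityID"),
                    "package": vuln.get("PkgName"),
                    "fixed_version": vuln.get("FixedVersion"),
                })
    return findings


def exit_code(report: dict) -> int:
    """Mirror --exit-code 1: non-zero when any blocking finding exists."""
    return 1 if blocking_findings(report) else 0


# Hand-written sample with placeholder IDs (not real scan output).
SAMPLE_REPORT = {
    "Results": [
        {
            "Target": "app (debian)",
            "Vulnerabilities": [
                {"VulnerabilityID": "CVE-0000-0001", "PkgName": "libexample",
                 "InstalledVersion": "1.0", "FixedVersion": "1.1", "Severity": "HIGH"},
                {"VulnerabilityID": "CVE-0000-0002", "PkgName": "libother",
                 "InstalledVersion": "2.0", "FixedVersion": "", "Severity": "LOW"},
            ],
        },
        {"Target": "requirements.txt"},
    ]
}
```

Keeping the blocking set as a parameter lets a program tighten or relax the gate without touching the parsing code.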

SBOM generation: Software Bill of Materials (SBOM) requirements are growing: DHS, OMB M-22-18, and DoD all reference SBOMs for government software. The commands below generate an SBOM at build time and attach it to the release image as a signed attestation:

    # Generate SPDX-format SBOM at build time
    syft $CI_REGISTRY_IMAGE:$CI_COMMIT_SHA -o spdx-json=sbom.json
    cosign attest --predicate sbom.json --type spdxjson $CI_REGISTRY_IMAGE:$CI_COMMIT_SHA

Vulnerability SLA Tracking for FedRAMP ConMon

FedRAMP ConMon requires tracking open vulnerabilities against defined SLAs:

| Severity | Remediation SLA |
|---|---|
| Critical | 15 days where CISA BOD 19-02 applies (internet-accessible systems); otherwise the High window |
| High | 30 days |
| Moderate | 90 days |
| Low | 180 days |

A vulnerability found in a container scan that isn't remediated within the SLA becomes a POA&M item. Repeated overdue POA&M items across consecutive ConMon reports can trigger an agency review of the authorization.

The tracking approach:

- Every scan result is ingested into a central vulnerability tracker (GitLab Vulnerability Management, DefectDojo, or similar)

- Each finding has a creation date and SLA deadline calculated automatically

- Items approaching SLA deadline trigger automated alerts to the engineering team

- Monthly ConMon reports are generated from the tracker, not manually assembled

This automation is the difference between sustainable ConMon and the quarterly fire drill that consumes an entire engineering team for a week.
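The deadline calculation and alerting steps in the tracking approach can be sketched in a few lines. This is an illustrative example only: the Finding class, the status labels, and the 7-day warning window are assumptions, and SLA_DAYS lists just two example windows that a real program would replace with its full SLA table.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Example SLA windows in days; replace with your program's full table.
SLA_DAYS = {"high": 30, "moderate": 90}


@dataclass(frozen=True)
class Finding:
    identifier: str
    severity: str
    created: date

    def deadline(self, sla_days=SLA_DAYS) -> date:
        """SLA deadline derived from the creation date and severity."""
        return self.created + timedelta(days=sla_days[self.severity])


def status(finding: Finding, today: date, warn_days: int = 7,
           sla_days=SLA_DAYS) -> str:
    """Classify a finding as overdue, approaching its deadline, or on track."""
    remaining = (finding.deadline(sla_days) - today).days
    if remaining < 0:
        return "overdue"
    if remaining <= warn_days:
        return "approaching"
    return "on_track"
```

A scheduled job could run status over every open finding and alert the engineering team on "approaching", while "overdue" items feed the POA&M.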


Frequently Asked Questions

What is the difference between SAST and DAST?

SAST (Static Application Security Testing) analyzes source code without executing it, finding vulnerabilities by examining code patterns. DAST (Dynamic Application Security Testing) tests running applications by simulating attacks against live endpoints. SAST finds code-level vulnerabilities early in development; DAST finds runtime vulnerabilities (authentication flaws, API misconfigurations, session management issues) that only appear when the application is running. NIST 800-53 SA-11 covers both through its enhancements for static code analysis (SA-11(1)) and dynamic code analysis (SA-11(8)).

Which SAST tool is recommended for Python/TypeScript government applications?

For Python, Bandit covers common Python security anti-patterns quickly; Semgrep with its Python ruleset provides deeper analysis and custom rule support. For TypeScript, ESLint with eslint-plugin-security covers most common patterns; Semgrep's TypeScript rules add coverage for framework-specific patterns (React XSS, Node.js security). GitLab CI's native SAST templates automatically run the appropriate analyzer per language. The combination of GitLab SAST template + Semgrep custom rules provides strong coverage for most Python/TypeScript government applications.

How often must applications be scanned under FedRAMP?

FedRAMP ConMon requires vulnerability scanning on a defined schedule: operating system/infrastructure, web application, and database scans at least monthly, with an annual assessment by a 3PAO. In practice, Rutagon runs container and dependency scanning on every CI/CD pipeline run (every code push), infrastructure scanning weekly via automated Amazon Inspector/Security Hub, and DAST quarterly against staging environments. The annual 3PAO assessment tests against scan evidence, so infrequent scanning tends to surface more findings at assessment time.

What is an SBOM and why do government programs require it?

An SBOM (Software Bill of Materials) is a machine-readable inventory of all software components in an application — every library, dependency, and their versions. Government requirements for SBOM stem from the 2021 Executive Order on Improving the Nation's Cybersecurity (EO 14028) and subsequent OMB guidance. An SBOM enables rapid identification of which systems are vulnerable when a critical CVE is published — instead of manually auditing every application, you query the SBOM inventory. For FedRAMP and DoD programs, generating and maintaining SBOMs is increasingly a contractual requirement.
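As a rough illustration of querying the SBOM inventory, the sketch below searches SPDX JSON documents for a named component. It relies on the packages array with name and versionInfo fields from the SPDX JSON format; the inventory structure, image references, and function names are hypothetical.

```python
def find_component(sbom: dict, name: str) -> list[tuple[str, str]]:
    """Return (name, version) pairs for packages matching a component name."""
    matches = []
    for package in sbom.get("packages", []):
        if package.get("name", "").lower() == name.lower():
            matches.append((package["name"], package.get("versionInfo", "unknown")))
    return matches


def affected_images(inventory: dict[str, dict], name: str) -> dict[str, list]:
    """Map image reference -> matching packages, skipping images with none."""
    hits = {}
    for image, sbom in inventory.items():
        found = find_component(sbom, name)
        if found:
            hits[image] = found
    return hits


# Hypothetical inventory: image reference -> parsed SPDX JSON document.
SAMPLE_INVENTORY = {
    "registry.example.test/api:abc123": {
        "packages": [
            {"name": "log4j-core", "versionInfo": "2.14.1"},
            {"name": "jackson-databind", "versionInfo": "2.13.0"},
        ]
    },
    "registry.example.test/web:def456": {
        "packages": [{"name": "react", "versionInfo": "18.2.0"}]
    },
}
```

With SBOMs stored per release, affected_images(SAMPLE_INVENTORY, "log4j-core") points straight at the one image that ships the component, which is the lookup that replaces a manual audit.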

How does AppSec testing integrate with the ATO process?

AppSec testing evidence supports multiple NIST 800-53 controls required for ATO: SA-11 (Developer Testing and Evaluation), RA-5 (Vulnerability Monitoring and Scanning), and SI-2 (Flaw Remediation). When a 3PAO conducts a FedRAMP assessment, they'll request evidence of SAST/DAST execution, tool configurations, scan reports, and how findings are tracked through remediation. Systems with mature, automated AppSec pipelines that generate clean evidence artifacts have smoother assessments: fewer findings, more credible control implementations, and more straightforward remediation narratives.
